TL;DR

- Root cause analysis (RCA) is not the same as incident investigation. RCA is the disciplined process of tracing an event backward through its causal chain until you reach the systemic failure that allowed every downstream error to happen — and then fixing that.

- Most organizations stop too early. They find the immediate cause (“the worker slipped”) and call it done. Real RCA digs until it hits the management system failure — inadequate training, missing procedure, broken supervision, flawed design.

- Five proven methods dominate field practice: the 5 Whys, Fishbone (Ishikawa) Diagram, Fault Tree Analysis, Barrier Analysis, and TapRooT®. Each suits a different level of incident complexity.

- The quality of your corrective actions depends entirely on the depth of your analysis. Shallow RCA produces “retrain the worker” recommendations. Deep RCA produces system-level changes that prevent recurrence across the entire operation.

- Every near-miss deserves the same analytical rigor as a fatality. The causal chains are identical — only the outcome differs.

I was sitting across the table from a plant manager in a refinery on the Gulf coast, reviewing the investigation file for a flash fire that had hospitalized two operators three weeks earlier. The file was forty pages thick — witness statements, photos, timeline reconstruction, gas test records. Impressive documentation. But when I turned to the corrective actions page, there were exactly two entries: “Retrain operators on hot work procedures” and “Issue safety stand-down.” I closed the file and asked one question — “Why did the atmospheric monitoring fail before the hot work permit was issued?” The room went quiet. Nobody had asked that. Nobody had traced the event past the operator who struck the ignition source. The investigation had found a person to blame. It had not found the reason the system let two workers walk into an explosive atmosphere.

That refinery experience captures the single most common failure in incident investigation across every industry I’ve worked in over the past decade. Organizations conduct investigations. They rarely conduct root cause analysis. The difference is not academic — it is the difference between an incident that repeats in six months and one that never happens again. Root cause analysis is the structured, evidence-based process of tracing an incident through its entire causal chain until you identify the fundamental system-level failure that, if corrected, eliminates the conditions for recurrence. This article breaks down what RCA actually is, the methods that work in the field, where most teams go wrong, and how to build corrective actions that hold.

What Is Root Cause Analysis in Occupational Health and Safety?

Root cause analysis is a systematic investigation methodology used to identify the deepest underlying cause — or causes — of an incident, near-miss, or undesirable event. It moves beyond surface-level observations (“the scaffold collapsed”) to uncover why conditions existed that made the collapse possible, why those conditions were not detected, and why the management system failed to prevent them.

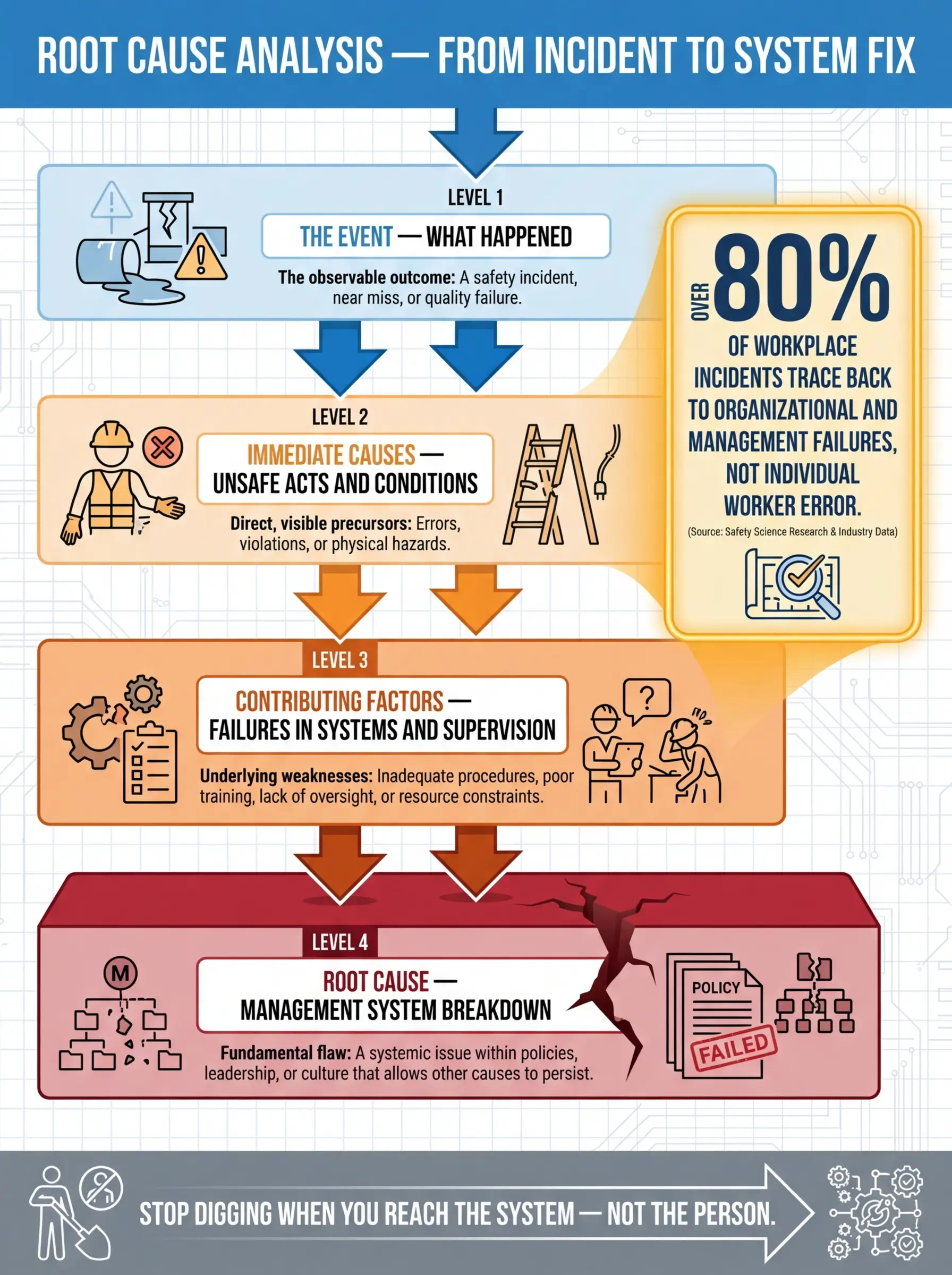

The distinction between an immediate cause and a root cause is critical, and it is where most investigation teams lose their way. Here is how the causal hierarchy breaks down in practice:

- The event is the observable outcome — the injury, the spill, the fire, the equipment failure. It is what triggers the investigation.

- Immediate causes are the unsafe acts or unsafe conditions directly preceding the event — the unsecured load, the missing guard, the energized equipment, the worker who bypassed a lockout.

- Contributing factors are the conditions that enabled or failed to prevent the immediate cause — inadequate supervision, missing pre-task risk assessment, poor lighting, time pressure from production schedules, fatigue from extended shifts.

- Root causes are the fundamental management system failures that allowed the contributing factors to persist uncorrected — absence of a competent person verification process, no management of change procedure for schedule modifications, failure to resource the safety department, a culture that normalizes shortcut-taking under production pressure.

OSHA’s investigation guidance (OSHA Publication 3895) defines root cause analysis as identifying “the basic or fundamental reason(s) that, if corrected, would prevent recurrence of the event.” The emphasis on recurrence prevention — not blame assignment — is the entire point of the methodology.

Pro Tip: If your corrective action list includes “retrain the worker” or “remind employees to follow procedures” as a standalone fix, you have not reached the root cause. Retraining addresses a symptom. The root cause is why the worker was not competent in the first place, why the procedure was not followed, or why the system did not catch the gap before the incident.

Why Root Cause Analysis Matters — The Cost of Stopping Too Early

I investigated a dropped object incident at a high-rise construction site in Southeast Asia where a 4-kilogram fitting fell twelve stories and missed a ground-level worker by less than a meter. The initial investigation concluded in two days: “Worker failed to secure tool lanyard. Corrective action: Toolbox talk on dropped object prevention.” Three months later, a nearly identical incident occurred on the same project — different worker, same floor zone, same type of unsecured fitting.

The repeat incident forced a deeper investigation. When we traced the causal chain properly, the root cause was not worker behavior. It was a procurement failure — the project had purchased tool lanyards rated for hand tools up to 2 kilograms, but routinely used fittings weighing 3–5 kilograms in that work zone. The lanyards physically could not secure the heavier items. No one had conducted a task-specific risk assessment matching lanyard capacity to actual tool weights. The purchasing specification had been copied from a previous project with different scope. The management system had no checkpoint linking procurement to task hazard analysis.
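The missing checkpoint between procurement and task hazard analysis amounts to a simple comparison. A minimal sketch, assuming a hypothetical helper `items_exceeding_rating`; the 2 kg rating and the 4 kg fitting come from the incident, while the item names and the wrench weight are invented for illustration:

```python
def items_exceeding_rating(lanyard_rating_kg, item_weights_kg):
    """Return the items a lanyard of the given rating cannot secure."""
    return {
        name: weight
        for name, weight in item_weights_kg.items()
        if weight > lanyard_rating_kg
    }

# Rating and fitting weight from the incident; names and wrench weight are made up.
work_zone_items = {"pipe fitting": 4.0, "hand wrench": 1.2}
too_heavy = items_exceeding_rating(2.0, work_zone_items)
print(too_heavy)  # {'pipe fitting': 4.0}
```

Any non-empty result means the purchasing specification does not match the task, which is the kind of gap a task-specific risk assessment should catch before work starts.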

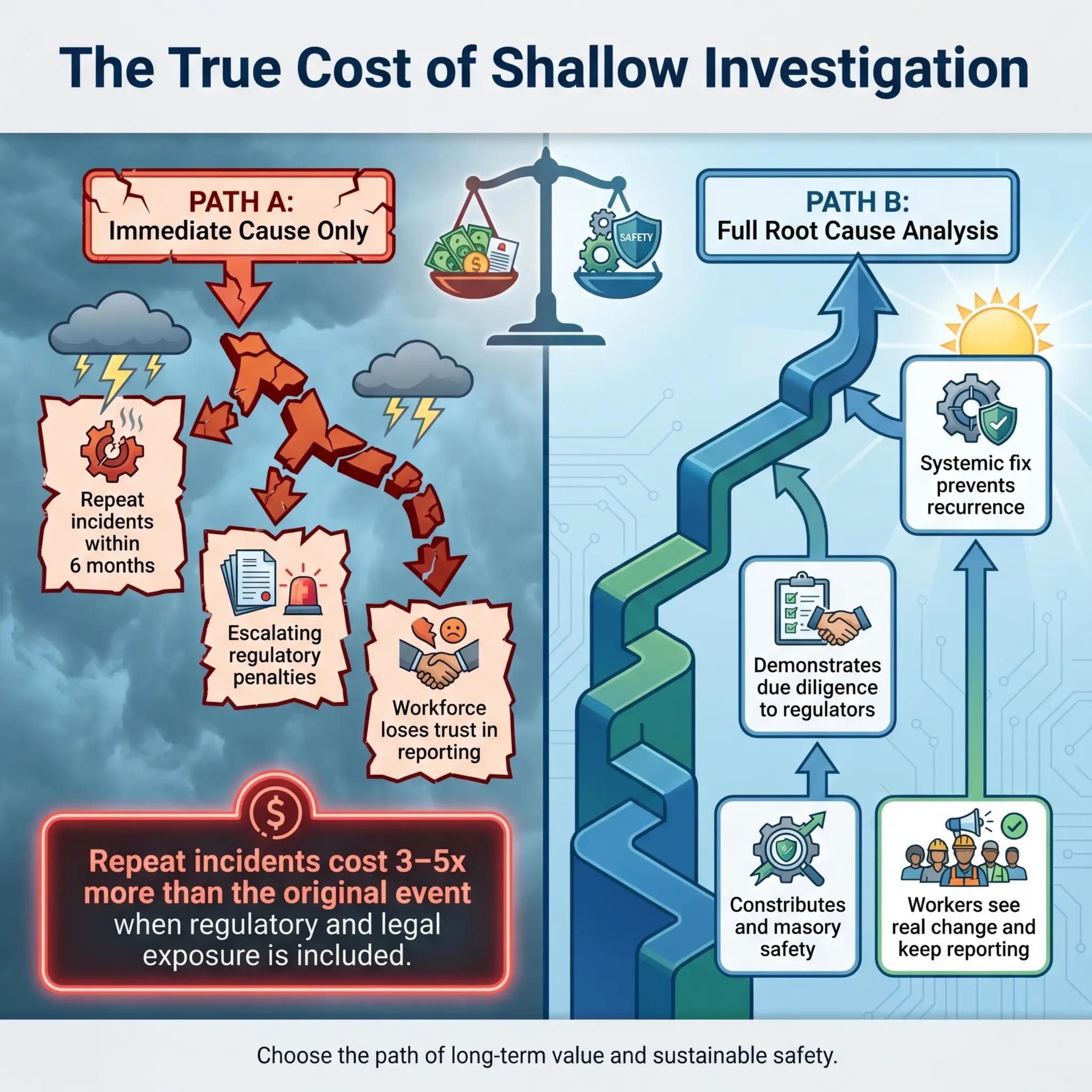

That is what shallow investigation costs. Here is what the data shows across industries:

- Incident recurrence falls as investigation depth rises. Organizations that consistently identify only immediate causes experience repeat incidents at rates 3–5 times higher than those conducting systematic RCA.

- Financial impact compounds with each recurrence — not just direct costs (medical, repair, downtime), but indirect costs including regulatory scrutiny, increased insurance premiums, litigation exposure, and workforce morale damage.

- Regulatory consequences escalate when repeat incidents reveal a pattern of inadequate corrective action. Both OSHA and the UK HSE treat recurrence as evidence of management failure, which can elevate enforcement from advice and improvement notices to prohibition notices, repeat citations, and significant fines.

- Worker trust erodes when people see the same hazards reappear after an investigation that was supposed to fix them. Once the workforce stops believing that reporting leads to real change, your near-miss reporting system collapses — and you lose your best early warning mechanism.

The Five Core Root Cause Analysis Methods Used in the Field

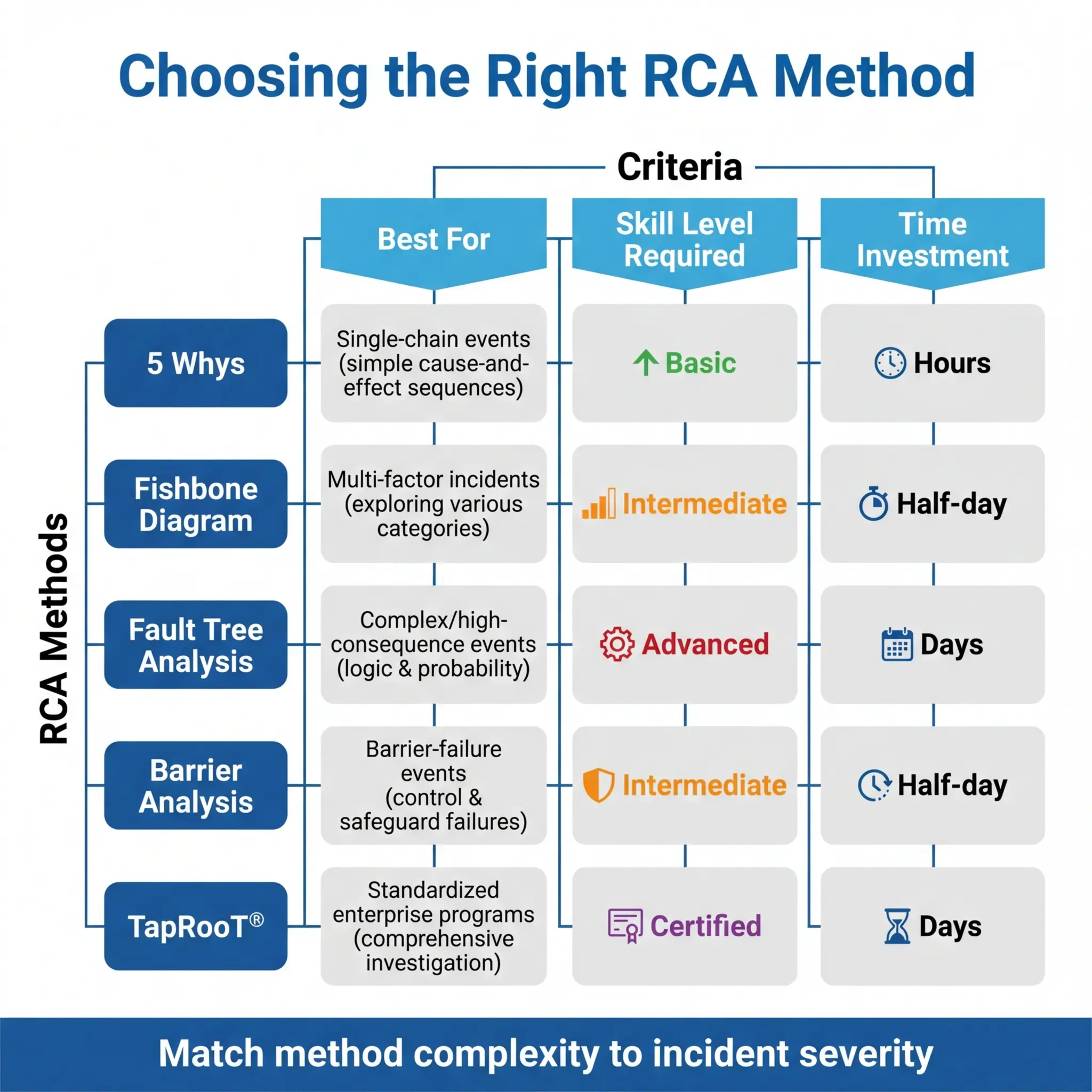

No single RCA method works for every incident. The right choice depends on the incident’s complexity, the investigation team’s skill level, the time and resources available, and the depth of systemic analysis required. Over years of conducting and reviewing investigations across construction, oil and gas, mining, and manufacturing, I have used all five of the following methods — and each has a specific sweet spot.

1. The 5 Whys Method

The 5 Whys is the simplest and most widely used RCA technique, and it is also the most frequently misapplied. The concept is straightforward: start with the event and ask “Why?” repeatedly — typically five times — until you reach a root cause that points to a system-level failure rather than an individual action.

The method works best for incidents with a single, relatively linear causal chain. It struggles with complex events involving multiple interacting causes. Here is a field example applied correctly:

- Why did the worker suffer a chemical burn on his forearm? → Because concentrated sodium hydroxide splashed onto unprotected skin.

- Why was his skin unprotected? → Because he was wearing short nitrile gloves that did not cover his forearms.

- Why was he wearing short gloves for a task involving caustic chemicals? → Because the job hazard analysis specified “chemical-resistant gloves” without specifying length or material rating for the specific chemical.

- Why did the JHA lack chemical-specific PPE requirements? → Because the JHA was a generic template copied from a different task and was never reviewed by someone with chemical hazard competency.

- Why was there no competency check on JHA content before it was issued for use? → Because the management system had no quality assurance step requiring technical review of JHAs before they entered the permit-to-work process.

The root cause is not the worker’s glove choice. The root cause is a management system gap — no quality assurance process for JHA content. The corrective action targets the system, not the individual.

Common mistakes teams make with the 5 Whys reveal why the method often fails to deliver real results:

- Stopping at “human error.” If your fifth “Why” ends at “because the worker did not follow the procedure,” you have not finished. Ask why they did not follow it — and you will find a system failure.

- Following only one causal branch. Many incidents have multiple “Why” paths. If you only follow one, you miss contributing factors that will cause the next incident.

- Accepting vague answers. Each “Why” answer must be specific, evidence-based, and verifiable — not speculative or general.

- Skipping the “so what” test. After reaching the final “Why,” test it: “If we fix this root cause, does it prevent the incident from recurring?” If the answer is uncertain, you have not gone deep enough.

Pro Tip: The number five is a guideline, not a rule. Some root causes emerge at the third “Why.” Some take seven or eight. Stop when you reach a management system failure that is within the organization’s power to fix — that is your root cause, regardless of the count.
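The chain and its stopping rule can be captured in a small data structure. This is a sketch only: `WhyStep`, the three `level` labels, and `chain_problems` are hypothetical names rather than part of any standard 5 Whys tool, and the evidence strings are placeholders.

```python
from dataclasses import dataclass

# Hypothetical labels for how deep an answer goes; not from any standard.
LEVELS = ("individual", "task", "system")

@dataclass
class WhyStep:
    question: str
    answer: str
    level: str          # one of LEVELS
    evidence: str = ""  # what supports the answer (record, statement, photo)

def chain_problems(chain):
    """Return a list of problems found in a 5 Whys chain (empty list = passes)."""
    if not chain:
        return ["chain is empty"]
    problems = []
    for i, step in enumerate(chain, start=1):
        if step.level not in LEVELS:
            problems.append(f"why {i}: unknown level {step.level!r}")
        if not step.evidence.strip():
            problems.append(f"why {i}: answer has no supporting evidence")
    if chain[-1].level != "system":
        problems.append("final answer is not a management system failure; keep asking why")
    return problems

# The chemical burn chain from above; evidence entries are illustrative placeholders.
burn_chain = [
    WhyStep("Why the chemical burn?", "NaOH splashed on unprotected skin", "individual", "medical report"),
    WhyStep("Why was skin unprotected?", "Short nitrile gloves", "individual", "PPE photos"),
    WhyStep("Why short gloves?", "JHA did not specify length or rating", "task", "JHA copy"),
    WhyStep("Why did the JHA lack this?", "Generic template, no competent review", "task", "JHA history"),
    WhyStep("Why no competency check?", "No QA step for JHAs before permit issue", "system", "procedure review"),
]

print(chain_problems(burn_chain))      # full chain
print(chain_problems(burn_chain[:2]))  # chain that stopped too early
```

The check does not care how many steps the chain has. It only asks whether each answer is backed by evidence and whether the last one lands on the system.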

2. Fishbone (Ishikawa) Diagram

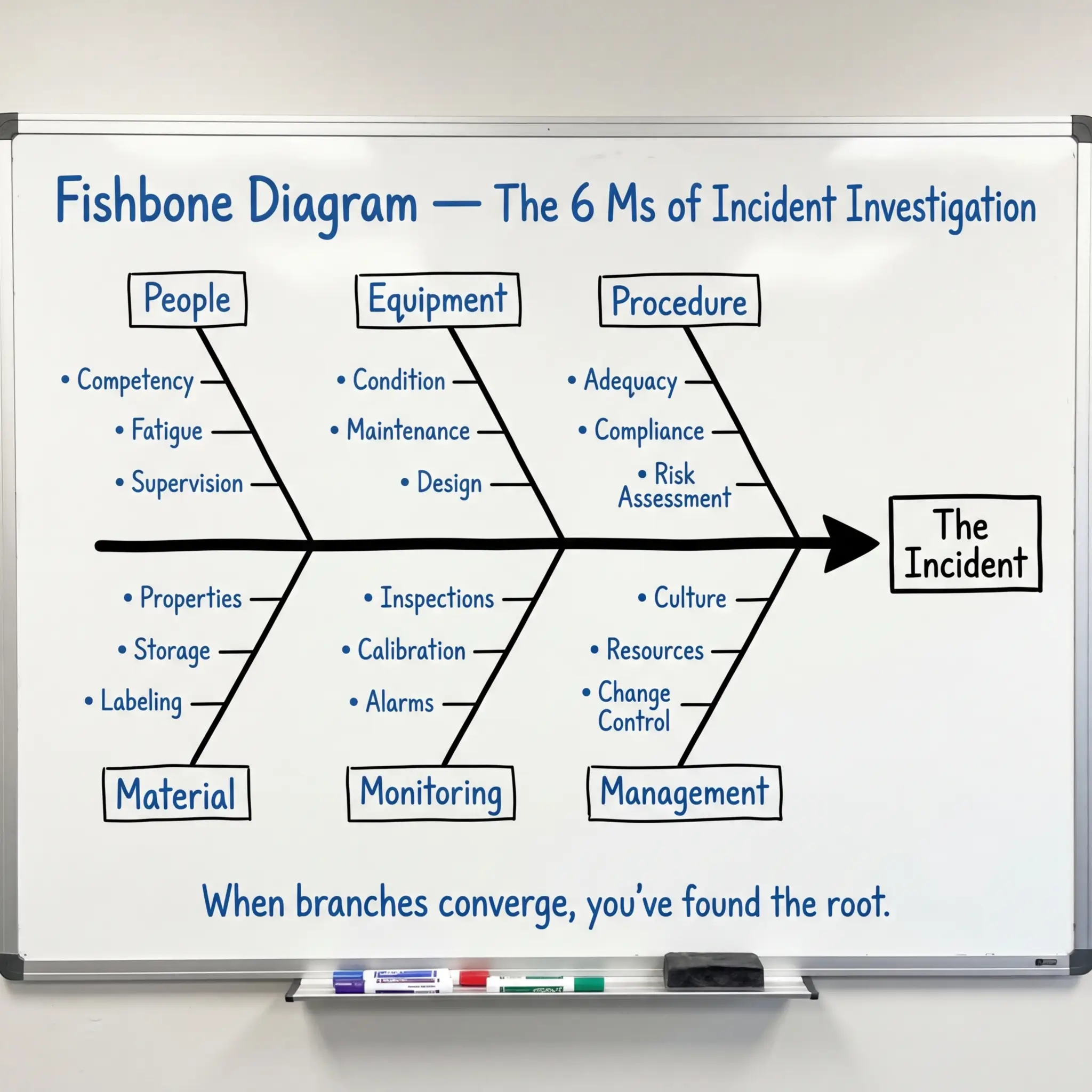

The Fishbone diagram is the method I recommend most often for investigation teams with mixed experience levels, because it provides visible structure that prevents the analysis from wandering. It organizes potential causes into predefined categories branching off a central spine that points to the event.

The standard categories used in HSE investigations — often remembered as the “6 Ms” — give the team a systematic framework for brainstorming:

- Man (People): Competency, training, fatigue, fitness for duty, supervision, communication, human factors

- Machine (Equipment): Condition, maintenance history, design adequacy, inspection status, guarding, calibration

- Method (Procedure): Existence of procedure, adequacy, accessibility, currency, compliance, risk assessment quality

- Material: Chemical properties, material condition, storage, labeling, compatibility, specification compliance

- Measurement (Monitoring): Inspection frequency, atmospheric monitoring, calibration, alarm set points, record keeping

- Management (Environment/System): Culture, resource allocation, change management, contractor oversight, audit findings, leadership commitment

The team populates each category branch with specific causal factors supported by evidence from the investigation. The completed diagram visually maps the causal landscape and reveals where multiple branches converge — those convergence points are almost always where root causes live.

I used a Fishbone analysis after a crane contact incident at a mining operation in Western Australia. The initial investigation pointed to the rigger misjudging the load swing radius. The Fishbone revealed contributing factors across five of the six categories — including a maintenance deficiency on the crane’s anti-two-block system, a lift plan that had not been updated after the ground conditions changed from the original survey, and a supervision gap where the appointed lift supervisor was simultaneously overseeing a second lift on the adjacent pad. No single “Why” chain would have caught all of those interacting causes.
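To illustrate how convergence shows up, the sketch below groups the crane incident factors by category and looks for underlying issues shared across branches. The function names and the "underlying issue" tags are assumptions added for illustration; the tags are one possible reading of the case, not findings from the actual investigation.

```python
from collections import defaultdict

SIX_MS = ("Man", "Machine", "Method", "Material", "Measurement", "Management")

def build_fishbone(factors):
    """Group (category, factor, underlying_issue) tuples by category."""
    bones = {m: [] for m in SIX_MS}
    for category, factor, _issue in factors:
        if category not in bones:
            raise ValueError(f"unknown category: {category}")
        bones[category].append(factor)
    return bones

def convergence_points(factors, min_branches=2):
    """Underlying issues that appear on at least `min_branches` different branches."""
    branches = defaultdict(set)
    for category, _factor, issue in factors:
        branches[issue].add(category)
    return {
        issue: sorted(cats)
        for issue, cats in branches.items()
        if len(cats) >= min_branches
    }

# Factors from the crane example; the third field is an illustrative tag.
crane_factors = [
    ("Man", "Rigger misjudged the load swing radius", "supervision coverage"),
    ("Management", "Lift supervisor overseeing two lifts at once", "supervision coverage"),
    ("Method", "Lift plan not updated after ground conditions changed", "change control"),
    ("Machine", "Anti-two-block system maintenance deficiency", "maintenance planning"),
]

print(convergence_points(crane_factors))
```

An issue that surfaces on two or more branches is a candidate root cause to test further, not a conclusion in itself.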

3. Fault Tree Analysis (FTA)

Fault Tree Analysis is the most rigorous of the common RCA methods, and it is the one I default to for high-consequence events — fatalities, major environmental releases, or process safety incidents where regulatory reporting and potential litigation demand an airtight analytical trail.

FTA works top-down. You start with the undesired event (the “top event”) and systematically decompose it using Boolean logic gates — AND gates (all sub-events must occur for the parent event to happen) and OR gates (any single sub-event can independently cause the parent event). The result is a hierarchical tree that maps every possible combination of failures, errors, and conditions that could produce the top event.

The method is powerful because it forces precision in causal logic that narrative-based methods miss:

- AND gates reveal where multiple barriers must fail simultaneously — these are the scenarios where redundancy in your safety system is being tested.

- OR gates reveal single points of failure — these are the vulnerabilities where one broken barrier is enough to cause the event.

- Minimal cut sets — the smallest combinations of base events that can produce the top event — identify your highest-priority corrective action targets.

FTA requires more technical skill and time than the 5 Whys or Fishbone methods, which is why it is typically reserved for major incidents or process safety applications. But for any event involving multiple interacting barriers, simultaneous failures, or complex technical systems, nothing else provides the same level of analytical rigor.
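The gate logic and the idea of minimal cut sets can be shown in a few lines. This is a minimal sketch, assuming a tree small enough to enumerate by brute force; the nested-tuple representation and the example events are hypothetical, and real fault trees are normally built and evaluated with dedicated tools.

```python
from itertools import product

def cut_sets(node):
    """Return all cut sets of a fault tree node as a list of frozensets.

    A node is either a string (basic event) or a tuple
    ("AND" | "OR", [child, child, ...]).
    """
    if isinstance(node, str):
        return [frozenset([node])]
    gate, children = node
    child_sets = [cut_sets(child) for child in children]
    if gate == "OR":
        # Any one child is enough: collect every child's cut sets.
        return [cs for sets in child_sets for cs in sets]
    if gate == "AND":
        # All children must occur: combine one cut set from each child.
        return [frozenset().union(*combo) for combo in product(*child_sets)]
    raise ValueError(f"unknown gate: {gate}")

def minimal_cut_sets(node):
    """Drop every cut set that strictly contains another cut set."""
    sets = set(cut_sets(node))
    minimal = [s for s in sets if not any(other < s for other in sets)]
    return sorted(minimal, key=lambda s: (len(s), sorted(s)))

# Hypothetical top event: ignition of a flammable atmosphere during hot work.
top_event = ("AND", [
    "ignition source present",
    ("OR", [
        "gas test skipped",
        ("AND", ["detector uncalibrated", "alarm muted"]),
    ]),
])

for cs in minimal_cut_sets(top_event):
    print(sorted(cs))
```

The shortest cut sets are the ones with the fewest barriers standing between normal operation and the top event, so they are usually where corrective action starts.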

IEC 61025 (Fault Tree Analysis) provides the international standard methodology for constructing and evaluating fault trees. In process safety contexts, FTA is one of the techniques used alongside layer of protection analysis (LOPA) in the hazard and risk assessment work that IEC 61511 (Safety Instrumented Systems) calls for.

4. Barrier Analysis

Barrier Analysis asks one deceptively simple question: what barriers existed between the hazard and the target (worker, environment, asset) — and why did each barrier fail? It is built on the concept that every incident represents a failure of one or more protective barriers that were supposed to prevent the hazard from reaching the target.

The method maps every barrier that should have been in place — engineered controls, administrative controls, PPE, supervision, alarms, procedures, permits — and evaluates each one against three criteria:

- Was the barrier present? Did it physically exist at the time of the event? A procedure that was written but not issued to the crew is absent.

- Was the barrier adequate? Was it designed and rated to control the specific hazard encountered? A fall protection anchor rated for restraint but used for arrest is inadequate.

- Did the barrier perform? Was it functioning correctly when needed? A gas detector with an expired calibration certificate has unknown performance.

I find Barrier Analysis particularly effective for incidents where “everything was in place” but the event happened anyway. During a confined space rescue drill that turned into an actual emergency at a wastewater treatment facility in Northern Europe, the Barrier Analysis revealed that four of the seven identified barriers were technically present but practically ineffective — the rescue tripod was positioned but not rigged, the standby rescuer was assigned but had not been trained on the specific vessel geometry, the atmospheric monitor was running but set to log-only mode instead of alarm mode, and the rescue plan existed on paper but had never been rehearsed for that specific space.

Every barrier was “there.” None of them worked. Barrier Analysis made that visible in a way that a narrative investigation report could not.
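The three questions translate directly into a record per barrier. A sketch under stated assumptions: the `Barrier` class and `first_failure` function are hypothetical, and the true/false values assigned to the wastewater example are one reading of the description above, not the investigation's actual scoring.

```python
from dataclasses import dataclass

@dataclass
class Barrier:
    name: str
    present: bool
    adequate: bool
    performed: bool

def first_failure(barrier):
    """Return the first of the three criteria the barrier fails, or None if it held."""
    if not barrier.present:
        return "not present"
    if not barrier.adequate:
        return "not adequate"
    if not barrier.performed:
        return "did not perform"
    return None

# Illustrative scoring of the four barriers named in the wastewater example.
barriers = [
    Barrier("rescue tripod", present=True, adequate=True, performed=False),
    Barrier("standby rescuer", present=True, adequate=False, performed=False),
    Barrier("atmospheric monitor", present=True, adequate=True, performed=False),
    Barrier("rescue plan", present=True, adequate=False, performed=False),
]

for b in barriers:
    print(f"{b.name}: {first_failure(b)}")
```

Checking the criteria in order keeps the finding honest: a barrier that was never adequate should not be reported as one that merely malfunctioned.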

5. TapRooT® Methodology

TapRooT® is a proprietary but widely adopted systematic investigation methodology that combines structured causal analysis with a predefined root cause classification tree. It is used extensively in oil and gas, nuclear, pharmaceutical, and military operations where investigation consistency and defensibility are critical.

The methodology guides the investigator through a defined sequence that prevents the common pitfalls of less structured approaches:

- SnapCharT® — a cause-and-effect timeline that maps what happened in chronological order, identifying causal factors at each step

- Root Cause Tree® — a decision tree that classifies each causal factor into predefined categories (procedures, training, quality control, management systems, human engineering, communications, equipment) and guides the investigator to specific root cause subcategories

- Corrective Action Helper® — a database of proven corrective actions linked to each root cause category, ensuring that fixes are proportionate and evidence-based

The strength of TapRooT® is standardization. When multiple investigators across different sites and projects use the same methodology, the organization builds a comparable database of root causes over time. Patterns emerge — maybe 40% of your incidents trace back to “procedures not used or not followed” under the root cause tree, which tells you where to invest prevention resources at the system level.

The limitation is cost and training time. TapRooT® requires certified training, and the methodology is license-controlled. For organizations with mature safety management systems and high investigation volumes, the investment pays back through consistency and trending capability. For smaller operations, the 5 Whys or Fishbone may deliver sufficient analytical depth at lower cost.
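The trending benefit does not depend on any particular software. As a rough illustration, the sketch below computes category shares from a list of classified investigations. It is not TapRooT® software or its API; the function name, site names, and records are all invented for the example.

```python
from collections import Counter

def root_cause_shares(records):
    """Percentage share of each root cause category across (site, category) records."""
    counts = Counter(category for _site, category in records)
    total = sum(counts.values())
    return {cat: round(100 * n / total, 1) for cat, n in counts.most_common()}

# Made-up records: (site, root cause category).
records = (
    [("Site A", "procedures")] * 4
    + [("Site B", "training")] * 3
    + [("Site A", "communications")] * 2
    + [("Site C", "equipment")]
)

shares = root_cause_shares(records)
print(shares)
```

The value comes from every investigator using the same category names, which is what a standardized classification tree enforces.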

How to Conduct a Root Cause Analysis — A Practical Field Process

Knowing the methods is only half the challenge. The other half is executing a disciplined investigation process that collects the right evidence, applies the method rigorously, and produces corrective actions that actually get implemented. The following sequence reflects what works consistently across industries — not a textbook process, but the one I have refined through dozens of investigations.

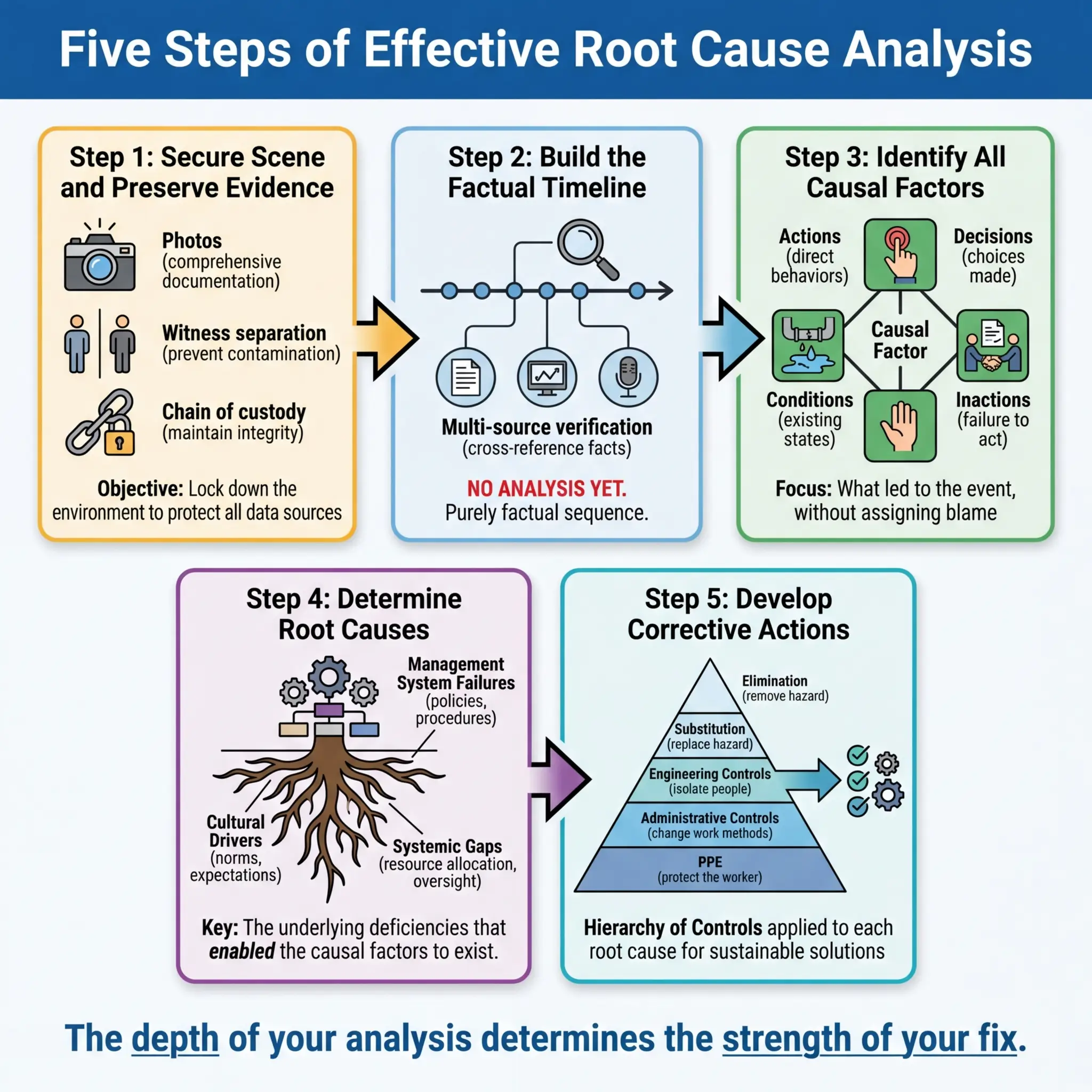

Step 1: Secure the Scene and Preserve Evidence

The first hours after an incident determine the quality of every analytical step that follows. Evidence degrades fast — conditions change, equipment gets moved, witnesses talk to each other and memories align rather than staying independent.

- Physically secure the area immediately. Barricade, lock out, and post a watch if necessary. Nobody enters except authorized investigators and emergency responders.

- Photograph everything before anything is touched — wide shots for context, medium shots for equipment positions, close-ups for details like gauge readings, valve positions, PPE condition, and signage. Capture 360-degree coverage.

- Preserve physical evidence — damaged equipment, failed components, PPE, tools, chemical containers, atmospheric monitoring logs, permit documents. Tag and secure each item with chain-of-custody documentation.

- Separate witnesses before taking statements. Independent recollections are investigation gold. Contaminated recollections are noise.

- Document environmental conditions — weather, lighting, temperature, noise levels, time of shift, production status — anything that establishes the operational context at the time of the event.

Step 2: Establish the Timeline

Before applying any RCA method, you need a factual, evidence-based timeline of what happened, in what order, involving whom, and under what conditions. This step is pure fact-gathering — no analysis, no blame, no conclusions.

Build the timeline from multiple independent sources to cross-verify accuracy:

- Witness statements — taken individually, using open-ended questions (“Tell me what you saw, heard, and did, starting from when you arrived at the work area”).

- Physical evidence — equipment positions, damage patterns, chemical residue locations, PPE condition.

- Electronic records — CCTV footage, access logs, SCADA data, alarm histories, atmospheric monitoring downloads, radio communication logs.

- Documents — permits, JHAs, toolbox talk records, inspection logs, maintenance records, training records, shift handover notes.

Pro Tip: The timeline should include what did not happen as much as what did. If the pre-task risk assessment was not conducted, if the atmospheric test was not performed, if the supervisor was not present — these absences are causal factors. An investigation that only maps actions and misses omissions will never reach the root cause.
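One way to keep omissions visible is to compare the merged timeline against the steps that should have happened. The sketch below assumes hypothetical function names and invented events, and it matches steps by exact description, which a real investigation would replace with human judgment.

```python
from datetime import datetime

def build_timeline(events):
    """Sort (timestamp, source, description) tuples chronologically."""
    return sorted(events, key=lambda event: event[0])

def missing_steps(expected, events):
    """Expected steps with no matching event: omissions the timeline should record."""
    observed = {description for _ts, _source, description in events}
    return [step for step in expected if step not in observed]

# Invented events from three independent sources.
events = [
    (datetime(2024, 1, 15, 8, 5), "CCTV", "crew entered work area"),
    (datetime(2024, 1, 15, 7, 40), "permit log", "permit issued"),
    (datetime(2024, 1, 15, 7, 30), "toolbox talk record", "toolbox talk held"),
]
expected = ["toolbox talk held", "atmospheric test performed", "permit issued"]

timeline = build_timeline(events)
for ts, source, description in timeline:
    print(ts.strftime("%H:%M"), source, description)
print("Not found in evidence:", missing_steps(expected, events))
```

Each item in the "not found" list is a question for the investigation: did the step not happen, or did it happen without leaving a record?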

Step 3: Identify Causal Factors

With the timeline established, identify every causal factor — every action, inaction, condition, or decision that contributed to the event. A causal factor is anything that, if it had been different, would have prevented the event or reduced its severity.

This is where you select and apply your RCA method (5 Whys, Fishbone, FTA, Barrier Analysis, or TapRooT®) to the evidence. The method structures your analysis; the evidence drives your conclusions. Common causal factor categories that surface repeatedly across HSE investigations include:

- Inadequate hazard identification — the risk was not recognized before work began

- Insufficient or absent procedures — no written method, or the method did not address the actual conditions encountered

- Competency gaps — workers or supervisors lacked the knowledge, skill, or experience for the specific task and hazard

- Supervision failures — inadequate presence, authority, or engagement of the responsible supervisor

- Equipment deficiency — wrong equipment for the task, poor maintenance, missed inspection, design inadequacy

- Communication breakdown — between shifts, between trades, between management and field, between contractor and client

- Management system gaps — no management of change process, no audit follow-up, inadequate resourcing, conflicting production and safety priorities

Step 4: Determine Root Causes

This is the critical analytical step where most investigations either succeed or fail. The question is not “what went wrong?” — you already know that from the timeline and causal factors. The question is “why did the system allow this to happen?”

Root causes live at the management system level. They are organizational decisions, policies, processes, or cultural norms that created the conditions for the incident. Test every proposed root cause against these three criteria:

- Would fixing this cause prevent recurrence of this specific event? If not, it is a contributing factor, not a root cause.

- Would fixing this cause prevent similar events across other operations? If yes, you have found a systemic root cause with broad corrective value.

- Is this within the organization’s control to fix? Root causes must be actionable. “The weather was bad” is a condition, not a root cause. “The management system had no adverse weather work suspension criteria” is a root cause.

| Test Question | If Yes | If No |
|---|---|---|
| Does fixing it prevent recurrence of this event? | Likely a root cause | Contributing factor only |
| Does fixing it prevent similar events elsewhere? | Systemic root cause (high-value fix) | May be event-specific |
| Is it within organizational control? | Actionable root cause | External factor: document it, but address what is controllable |
| Does it point to a management system element? | True root cause | May need deeper analysis |
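The test questions can be applied mechanically once the team has agreed on the yes/no answers. A minimal sketch: the key names and `assess_candidate` are hypothetical, and the answers given for the two weather statements are illustrative.

```python
# (verdict if yes, verdict if no) for each test question in the table.
VERDICTS = {
    "prevents_recurrence": ("likely a root cause", "contributing factor only"),
    "prevents_similar_elsewhere": ("systemic root cause", "may be event-specific"),
    "within_control": ("actionable root cause", "external factor"),
    "management_system_element": ("true root cause", "may need deeper analysis"),
}

def assess_candidate(answers):
    """Map yes/no answers (dict of bools) to the verdict for each test question."""
    missing = set(VERDICTS) - set(answers)
    if missing:
        raise ValueError(f"unanswered questions: {sorted(missing)}")
    return {q: VERDICTS[q][0 if answers[q] else 1] for q in VERDICTS}

# "The weather was bad": fails every test in this illustrative scoring.
weather = assess_candidate(dict.fromkeys(VERDICTS, False))
# "No adverse weather work suspension criteria": passes every test.
no_criteria = assess_candidate(dict.fromkeys(VERDICTS, True))

print(weather["within_control"])      # external factor
print(no_criteria["within_control"])  # actionable root cause
```

The answers themselves still have to come from evidence. The code only makes sure no question is skipped.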

Step 5: Develop and Implement Corrective Actions

The entire value of root cause analysis collapses if the corrective actions are weak, vague, or unimplemented. Every corrective action must be specific enough to assign, resource, and verify — and must target the root cause, not just the immediate cause.

Effective corrective actions follow the hierarchy of controls — a principle that applies to corrective action design just as it applies to hazard control:

- Elimination — remove the root cause entirely (e.g., eliminate the need for confined space entry through process redesign)

- Substitution — replace the system, process, or material that created the vulnerability (e.g., replace a manual isolation procedure with an automated interlock)

- Engineering controls — design physical barriers or safeguards into the system (e.g., install a fixed gas detection system with automatic alarm and shutdown)

- Administrative controls — strengthen procedures, training, supervision, and audit processes (e.g., implement a mandatory JHA quality review step by a competent person before permit issuance)

- PPE — upgrade or specify personal protective equipment as a last line of defense (e.g., specify chemical-specific glove selection with length and breakthrough time requirements)

The strongest corrective action packages combine controls from multiple levels. An investigation that produces only administrative controls and PPE changes — without considering whether elimination, substitution, or engineering solutions are feasible — has not applied the hierarchy properly.
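A quick review of a corrective action package against the hierarchy might look like the sketch below. The function name and warning text are assumptions; the example actions are taken from the list above.

```python
HIERARCHY = ("elimination", "substitution", "engineering", "administrative", "ppe")

def review_package(actions):
    """actions: list of (description, level) pairs. Returns (levels_used, warnings)."""
    levels = []
    for _description, level in actions:
        if level not in HIERARCHY:
            raise ValueError(f"unknown control level: {level}")
        if level not in levels:
            levels.append(level)
    levels.sort(key=HIERARCHY.index)  # strongest control first
    warnings = []
    if levels and all(level in ("administrative", "ppe") for level in levels):
        warnings.append(
            "only administrative/PPE actions: check whether elimination, "
            "substitution, or engineering controls are feasible"
        )
    return levels, warnings

weak_package = [
    ("Mandatory JHA quality review before permit issuance", "administrative"),
    ("Chemical-specific glove selection", "ppe"),
]
strong_package = weak_package + [
    ("Fixed gas detection with automatic alarm and shutdown", "engineering"),
]

print(review_package(weak_package))
print(review_package(strong_package))
```

A warning here is a prompt to reconsider, not a verdict. Sometimes higher-order controls have been properly evaluated and are not feasible, and the report should say so.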

A Complete Root Cause Analysis Example — Worked Through

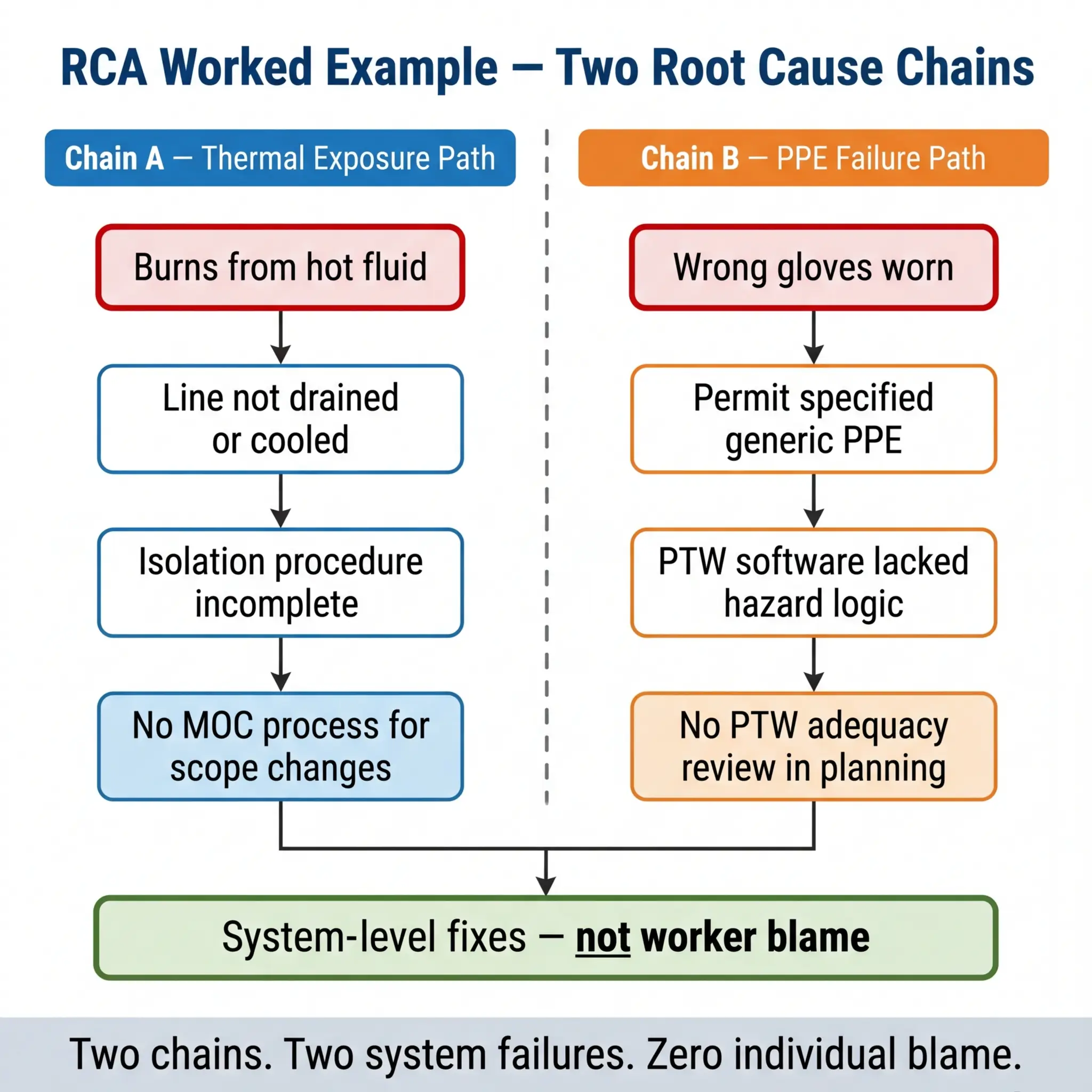

Theory is useful. Seeing a full RCA applied to a realistic scenario is what makes it stick. The following example is a composite of multiple actual investigations I have participated in, with details modified for confidentiality. The analytical method and findings are authentic.

The Incident

During a scheduled maintenance turnaround at a petrochemical facility, a pipefitter suffered second-degree burns to his hands and forearms when residual hot process fluid discharged from a flanged joint he was breaking. The fluid was a heat transfer oil at approximately 180°C. The worker was wearing standard leather work gloves — not thermal-rated chemical gloves. He required hospital treatment and was off work for six weeks.

The 5 Whys Application

The investigation team applied the 5 Whys method, running two parallel causal chains because the initial analysis revealed two independent contributing paths:

Chain A — The thermal exposure:

- Why was the worker burned? → Hot process fluid contacted unprotected skin.

- Why was hot fluid present in the line? → The line had not been fully drained and cooled before the work permit was issued.

- Why was the line not drained and cooled? → The isolation authority confirmed “isolated” but did not verify “drained and cooled” as a separate condition.

- Why did the isolation authority not verify drain/cool status? → The isolation procedure did not include drain and cool verification as a mandatory checklist item — it only required energy isolation confirmation.

- Why did the isolation procedure lack drain/cool verification? → The procedure had been written for ambient-temperature systems and was never updated through management of change when hot process lines were added to the turnaround scope.

Root cause (Chain A): Absence of a management of change (MOC) process to update isolation procedures when turnaround scope expanded to include hot process systems.

Chain B — The PPE failure:

- Why was the worker wearing standard leather gloves? → The work permit specified “leather work gloves” as required PPE.

- Why did the permit specify inadequate gloves? → The permit writer selected PPE from a generic drop-down list that did not differentiate by thermal hazard.

- Why did the PPE selection system lack thermal hazard differentiation? → The permit-to-work software had not been updated to include task-specific PPE logic linked to process conditions.

- Why had the software not been updated? → No one had conducted a gap analysis of the PTW system against the specific hazards present in the expanded turnaround scope.

- Why was no gap analysis conducted? → The turnaround planning process did not include a PTW system adequacy review as a planning milestone.

Root cause (Chain B): Turnaround planning process lacked a mandatory PTW system adequacy review step before work packages were issued.

Corrective Actions Developed

The corrective actions targeted both root causes at the system level, not the individual level. No one was “retrained on glove selection.” Instead, the following system changes were implemented:

- MOC procedure updated to require scope-change review of all affected procedures — including isolation procedures — whenever turnaround scope is modified after initial planning

- PTW software upgraded to require hazard-specific PPE selection logic linked to process conditions (temperature, pressure, chemical identity) rather than generic drop-down lists

- Turnaround planning procedure amended to include a mandatory PTW system adequacy review milestone, signed off by the HSE lead and the turnaround manager, before work packages are approved for issue

- Isolation procedure rewritten to include drain, purge, and cool verification as separate mandatory checklist items independent of energy isolation confirmation

Not one of these corrective actions mentions the injured worker by name. Not one says “retrain.” Every one changes the system that failed.

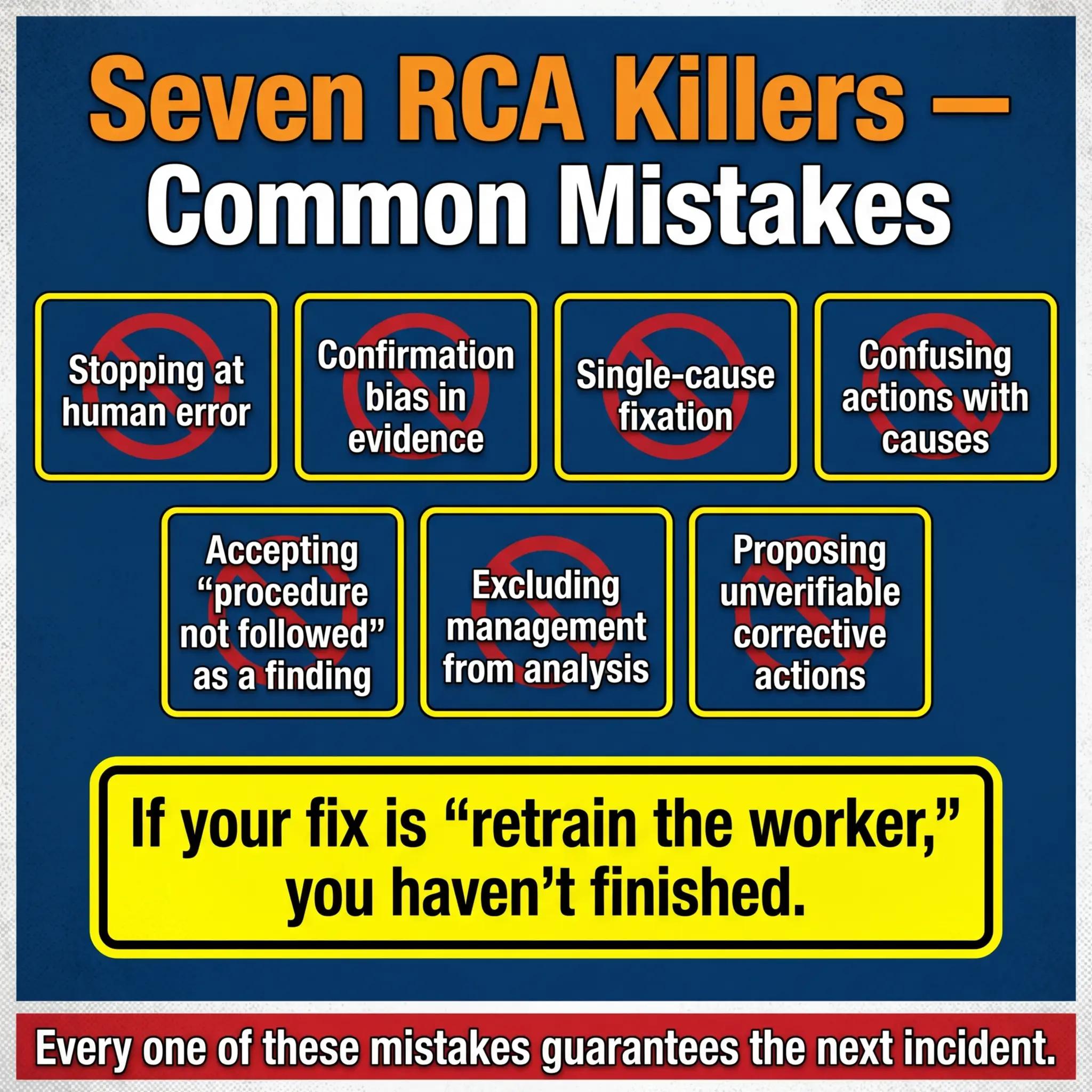

Common Mistakes That Destroy Root Cause Analysis Quality

I have reviewed hundreds of investigation reports across industries, and the same analytical errors appear so frequently that they almost qualify as their own hazard category. Recognizing these mistakes is as important as knowing the methods, because a flawed RCA is worse than no RCA — it creates a false sense that the problem has been solved.

The following mistakes account for the vast majority of RCA failures I encounter during audits and management system reviews:

- Stopping at human error. “The worker made a mistake” is never a root cause. It is the starting point. Every human error has a system context — inadequate training, confusing procedure, poor workplace design, fatigue from scheduling decisions, production pressure from management priorities. The question is always why the system allowed or encouraged the error.

- Confirmation bias in evidence collection. Investigation teams often form a hypothesis within the first hour and then unconsciously collect evidence that supports it while ignoring contradictory data. Disciplined RCA requires collecting all evidence before forming any conclusions.

- Single-cause fixation. Most incidents have multiple interacting causes. An investigation that identifies one root cause and stops has almost certainly missed others that will produce the next incident.

- Confusing missing barriers with root causes. “Lack of training” is not a root cause; it is a missing barrier. The root cause is why training was not provided, not adequate, or not verified. Was there no training needs analysis? No competency verification process? No budget allocation?

- Accepting “the procedure was not followed” as a finding. This is the single most common dead-end in RCA. If the procedure was not followed, ask why — was it accessible? Was it current? Was it practical for the actual field conditions? Did the worker know it existed? Was there a supervision mechanism to verify compliance? Was there production pressure to skip it?

- Excluding management from the causal analysis. Many investigation teams are culturally unable or organizationally unwilling to trace causes up to management decisions. But resource allocation, scheduling, staffing levels, contractor selection, safety budget, and cultural tone are all management responsibilities — and they are frequently where root causes live.

- Proposing corrective actions that cannot be verified. “Improve safety culture” is not a corrective action. It cannot be assigned to a person, completed by a date, or verified as effective. Every corrective action must be specific, measurable, assignable, and verifiable.
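The last point lends itself to a simple completeness check. The following is a minimal sketch in Python, assuming a hypothetical `CorrectiveAction` record; the field names, the example owner, and the example date are illustrative and not taken from any standard or tracking system.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class CorrectiveAction:
    # All field names are hypothetical, chosen for illustration only.
    description: str
    owner: Optional[str] = None                # an accountable person, not a department
    due_date: Optional[date] = None            # completion deadline
    verification_method: Optional[str] = None  # how effectiveness will be checked


def missing_elements(action: CorrectiveAction) -> List[str]:
    """Return the elements an action still lacks before it can be tracked."""
    gaps = []
    if not action.owner:
        gaps.append("owner")
    if action.due_date is None:
        gaps.append("due_date")
    if not action.verification_method:
        gaps.append("verification_method")
    return gaps


# Example values below are invented for the sketch.
vague = CorrectiveAction(description="Improve safety culture")
specific = CorrectiveAction(
    description="Add a gas test sign-off field to the hot work permit form",
    owner="Permit system coordinator",
    due_date=date(2025, 3, 31),
    verification_method="Audit 20 issued permits for a completed sign-off",
)

print(missing_elements(vague))     # lists every missing element
print(missing_elements(specific))  # empty list: nothing missing
```

A check like this only confirms that an owner, a date, and a verification method exist. Whether the description is specific enough, and whether the action actually reaches the root cause, still needs human review.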

HSE UK’s guidance on investigating accidents and incidents (HSG245) warns against blame-oriented investigations and stresses that the immediate cause is rarely the root cause. The guidance directs investigators to examine management arrangements, organizational factors, and the overall safety management system, not just frontline actions.

Pro Tip: After completing your RCA, apply the “recurrence test” to every corrective action: If we implement this action and change nothing else, will this specific incident still be able to recur? If the answer is yes — the action addresses a symptom, not the root cause. Go deeper.

Root Cause Analysis and Near-Miss Investigations

There is a persistent misunderstanding across many organizations that RCA is reserved for serious incidents — injuries requiring medical treatment, significant environmental releases, major equipment damage. Near-misses, the thinking goes, get a quick report and a toolbox talk. That approach throws away the most valuable safety data your operation produces.

A near-miss has exactly the same causal chain as the incident it nearly became. The hazard was present. The barriers failed. The exposure occurred. The only difference is outcome — the worker happened to step left instead of right, the wind happened to push the vapor cloud away from the ignition source, the falling object happened to land where nobody was standing. Outcome is a matter of chance. Causal chains are a matter of management.

I learned this lesson definitively on a large engineering, procurement, and construction (EPC) project where we tracked near-miss RCA data against actual incident RCA data over eighteen months. The causal factor profiles were nearly identical: the same management system gaps, the same supervision failures, and the same procedural inadequacies appeared in both data sets. The near-misses were predicting the incidents. When we started applying the same RCA rigor to near-misses that we applied to recordable injuries, our incident rate dropped by over 40% within a year, because we were fixing root causes before they produced injuries, not after.

Organizations that want to shift from reactive to proactive safety management must treat near-miss RCA as a core operational discipline:

- Apply a formal RCA method (5 Whys minimum, Fishbone for complex near-misses) to every high-potential near-miss — defined as any event where a slight change in timing, position, or conditions would have resulted in a serious injury or fatality.

- Track near-miss root causes in the same database as incident root causes. Trending this combined data reveals systemic patterns that pure incident data — which is low-frequency by nature — cannot show.

- Close the loop visibly. Workers who report near-misses need to see the investigation result and the corrective action. If reporting disappears into a black hole, the reporting stops. And when reporting stops, the next near-miss becomes the next fatality.
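The combined trending described above can be sketched in a few lines of Python. This is an illustration only: the record layout, the category names, and the sample rows are assumptions made up for the example, not real project data.

```python
from collections import Counter

# Hypothetical records: (event_type, root_cause_category).
# Invented sample rows, not real data.
records = [
    ("near_miss", "supervision"),
    ("near_miss", "procedure"),
    ("incident", "supervision"),
    ("near_miss", "supervision"),
    ("near_miss", "training_system"),
    ("incident", "procedure"),
]


def cause_profile(rows, event_type=None):
    """Share of each root cause category, optionally for one event type."""
    selected = [cause for kind, cause in rows if event_type in (None, kind)]
    counts = Counter(selected)
    total = sum(counts.values())
    return {cause: n / total for cause, n in counts.items()} if total else {}


combined = cause_profile(records)                    # incidents and near-misses together
near_miss_only = cause_profile(records, "near_miss")
incident_only = cause_profile(records, "incident")

# Most frequent root cause category across the combined data set.
top_cause = Counter(cause for _, cause in records).most_common(1)[0]

print(combined)
print(top_cause)
```

Comparing `near_miss_only` with `incident_only` is one way to see whether the two profiles line up. With only two incident rows in this sample, the incident profile alone says very little, which is the reason for pooling the data.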

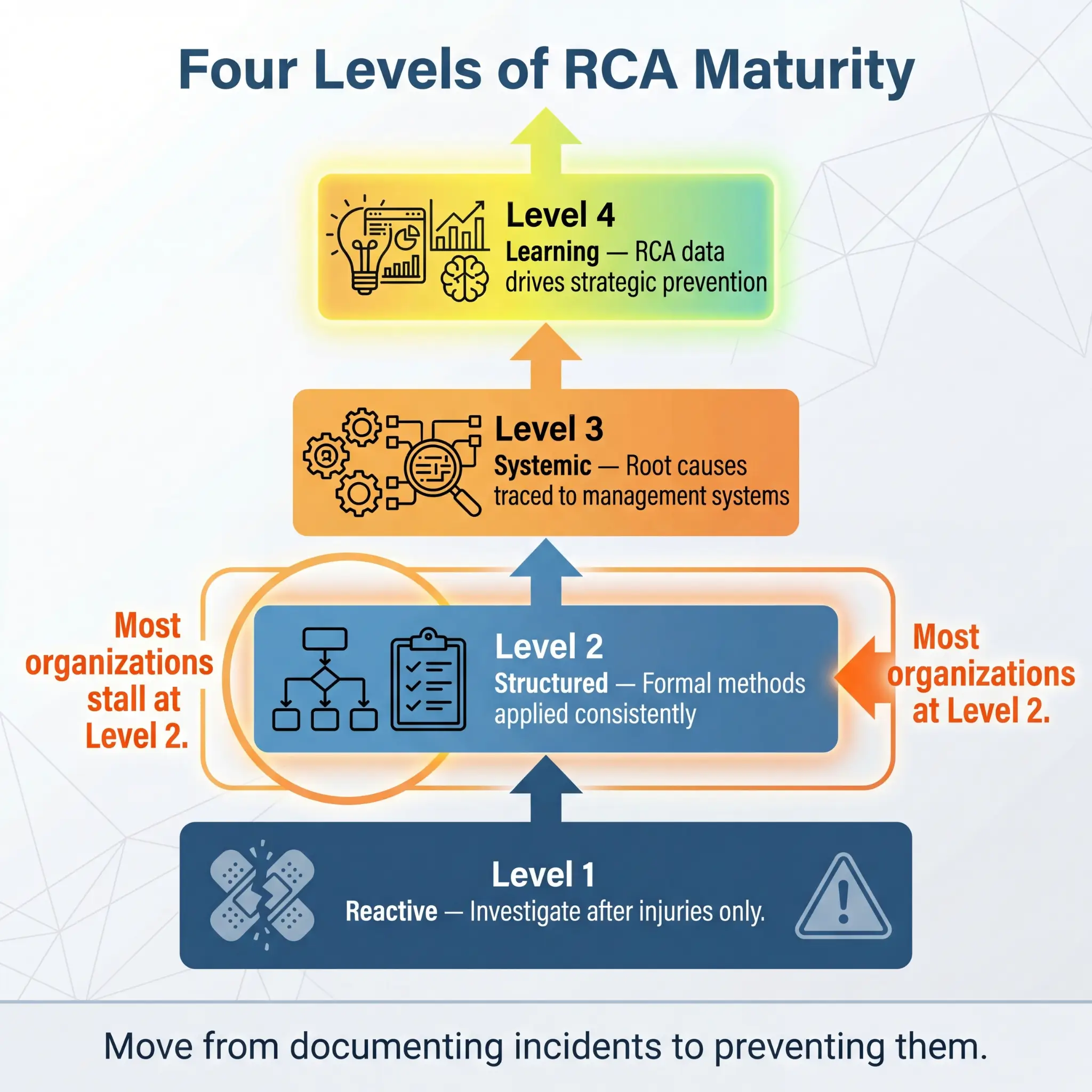

Building an Organizational RCA Capability

Root cause analysis does not work as an isolated investigation technique. It works when it is embedded in the organization’s safety management system as a repeatable, resourced, and accountable process. Too many organizations treat RCA as something the safety department does after a bad day. Effective organizations build it into the operational rhythm.

The following elements are what distinguish organizations that genuinely learn from incidents from those that just document them:

- Trained investigation teams — not just safety staff, but operational supervisors, maintenance leads, and engineers who understand how work actually happens on site. Investigation skill is a competency that requires training and practice, not just good intentions.

- Clear investigation triggers — a defined matrix specifying which incident types and severity levels require which level of RCA. A first-aid case may warrant a 5 Whys. A lost-time injury gets a Fishbone or Barrier Analysis. A fatality or high-potential event gets a full Fault Tree or TapRooT® investigation.

- Protected investigation time and resources — investigation teams must have the authority and time to conduct thorough analysis without production pressure to “wrap it up quickly.” Rushed investigations produce shallow root causes.

- Management review and accountability — corrective actions must be reviewed, approved, and tracked by senior management. If the root cause points to a management system failure, management must own the fix — not delegate it back to the safety department.

- Effectiveness verification — every corrective action must be verified for implementation AND effectiveness. Implemented does not mean effective. A revised procedure that nobody has been trained on is implemented but not effective. A new engineering control that has not been tested under actual operating conditions is installed but not verified.
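The investigation trigger matrix from the list above can be written down as a small lookup. The sketch below assumes three severity levels and uses only the example pairings given in the triggers bullet; the names `Severity`, `RCA_METHODS`, and `required_methods` are hypothetical, and a real matrix would be defined by each organization.

```python
from enum import IntEnum
from typing import List


class Severity(IntEnum):
    # Hypothetical severity scale for illustration.
    FIRST_AID = 1
    LOST_TIME = 2
    FATALITY = 3


# Pairings follow the examples in the triggers bullet; adjust to local policy.
RCA_METHODS = {
    Severity.FIRST_AID: ["5 Whys"],
    Severity.LOST_TIME: ["Fishbone", "Barrier Analysis"],
    Severity.FATALITY: ["Fault Tree Analysis", "TapRooT®"],
}


def required_methods(actual: Severity, high_potential: bool = False) -> List[str]:
    """Select candidate RCA methods; high-potential events escalate to the top level."""
    level = Severity.FATALITY if high_potential else actual
    return RCA_METHODS[level]


print(required_methods(Severity.FIRST_AID))
print(required_methods(Severity.FIRST_AID, high_potential=True))
```

Keying the lookup on potential as well as actual outcome means a first-aid case that could easily have been fatal is not routed to the lightest method.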

| RCA Maturity Level | Characteristics | Typical Outcome |
|---|---|---|
| Reactive | RCA only after serious injuries. Focused on immediate causes. Corrective actions are retraining and reminders. | Repeat incidents. Regulatory citations. Eroding workforce trust. |
| Structured | Formal RCA methods applied. Investigation triggers defined. Causal factors identified. | Incident reduction. Better compliance. Inconsistent depth. |
| Systemic | Root causes traced to management systems. Near-misses analyzed with same rigor. Corrective actions target systems. | Sustained improvement. Proactive hazard identification. Strong safety culture. |
| Learning | RCA data trended across operations. Patterns drive strategic prevention. Safety intelligence informs business decisions. | Industry-leading performance. Continuous improvement embedded in operations. |

Conclusion

Root cause analysis is not an investigation technique reserved for safety professionals with specialized certifications. It is a fundamental operational discipline — the mechanism through which organizations convert painful events into permanent prevention. Every incident carries information. RCA is the process that extracts it. And the quality of what you extract determines whether the next shift is safer than the last one.

The methods I have outlined — the 5 Whys, Fishbone, Fault Tree Analysis, Barrier Analysis, and TapRooT® — are tools. They work when they are applied with discipline, supported by evidence, and driven by a genuine commitment to finding the truth rather than finding someone to blame. The corrective actions they produce are only as strong as the analytical depth behind them. A shallow analysis produces a shallow fix. A deep analysis produces a system-level change that protects every worker on every shift going forward.

If I could leave you with one principle from a decade of investigating incidents across refineries, mine sites, construction projects, and manufacturing plants, it would be this: the root cause is never the person. The root cause is always the system that put that person in a position where failure was possible, probable, or inevitable. Fix the system. Protect the people. That is what root cause analysis exists to do, and it is the only investigation outcome that matters.