How many safeguards does it actually take to bring a high-consequence scenario down to tolerable risk? That question comes up in every HAZOP follow-up meeting I’ve ever sat through — and the honest answer is that gut feeling and group consensus are terrible substitutes for a structured method. In a batch reactor facility handling exothermic solvent-based reactions, a single overpressure scenario can involve a basic process control system, a high-pressure alarm with operator response, a safety instrumented system, and a rupture disc — four apparent layers of defense. Whether those four layers genuinely reduce risk enough, or whether two of them share a common failure mode that collapses them into one, is precisely the question Layers of Protection Analysis is designed to answer.

LOPA gives process safety teams a disciplined, repeatable way to evaluate risk scenario by scenario, without the resource intensity of a full Quantitative Risk Assessment. It strips away subjective debate and replaces it with order-of-magnitude calculations that either confirm your safeguards are adequate or expose exactly how much additional risk reduction you need. This guide walks through every element a beginner needs: what LOPA is, how it differs from HAZOP and QRA, what qualifies as an Independent Protection Layer, how a basic study works step by step, a worked example, and the standards that underpin the method. If you’ve completed your first HAZOP and someone just told you “we need to LOPA those scenarios,” this is where to start.

What Is Layers of Protection Analysis (LOPA)?

Layers of Protection Analysis is a semi-quantitative risk assessment method that evaluates whether existing safeguards reduce a specific cause-consequence scenario to a tolerable risk level. Unlike broad hazard identification techniques, LOPA zooms in on one initiating cause linked to one defined consequence and asks a focused question: do the protection layers between that cause and that consequence provide enough risk reduction?

The method works with order-of-magnitude estimates rather than precise probabilistic data. You assign a frequency to the initiating event, credit each qualifying protection layer for the risk reduction it provides, apply any relevant conditional modifiers, and compare the resulting mitigated event frequency against your organization’s risk tolerance criteria. If the number is at or below the threshold, the existing protections are sufficient. If it is above, you know exactly how much additional risk reduction is required.

Three characteristics define LOPA and separate it from other methods:

- Scenario-specific evaluation: Each LOPA examines a single initiating event leading to a single consequence — not an entire process unit or plant-wide hazard register.

- Order-of-magnitude precision: Frequencies and failure probabilities are expressed as powers of ten (10⁻¹, 10⁻², 10⁻³), avoiding false precision while maintaining analytical rigor.

- Decision-oriented output: The result is a clear yes-or-no comparison against risk tolerance, plus a quantified gap if more protection is needed.
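The power-of-ten convention can be made mechanical. The sketch below is a minimal illustration, assuming a hypothetical helper `to_order_of_magnitude` and assuming that rounding *up* to the next power of ten is the cautious default (a larger frequency or PFD claims less risk reduction). It is not taken from the CCPS book; it simply shows how a value like 2.7 × 10⁻³ collapses to a single order of magnitude.

```python
import math


def to_order_of_magnitude(value: float, conservative: bool = True) -> float:
    """Snap a positive frequency (per year) or PFD to a power of ten.

    conservative=True rounds up to the next power of ten (assumption: the
    cautious choice, since a larger number credits less risk reduction).
    conservative=False rounds to the nearest power of ten in log space.
    """
    if value <= 0:
        raise ValueError("frequencies and PFDs must be positive")
    log_value = math.log10(value)
    if conservative:
        # Small tolerance so exact powers of ten (e.g. 1e-3) are not bumped
        # up a whole order by floating-point noise in log10.
        exponent = math.ceil(log_value - 1e-9)
    else:
        exponent = round(log_value)
    return 10.0 ** exponent


print(to_order_of_magnitude(2.7e-3))                      # rounded up
print(to_order_of_magnitude(2.7e-3, conservative=False))  # rounded to nearest
```

Whether a team rounds up or to the nearest order is a site convention; the point is to pick one and apply it consistently.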

The canonical reference for the method is the CCPS Layer of Protection Analysis book, published by the Center for Chemical Process Safety, which formalized the framework that most practitioners use today.

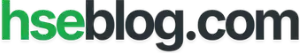

Where LOPA Fits Between HAZOP, PHA, and QRA

Every time I brief a new engineer on our risk assessment workflow, the first confusion is where LOPA sits relative to the tools they already know. HAZOP identifies deviations and their consequences. LOPA doesn’t replace that — it picks up specific high-consequence scenarios that HAZOP flagged and subjects them to a deeper, structured evaluation. At the other end of the spectrum, a full Quantitative Risk Assessment models consequence severity, dispersion, individual risk contours, and societal risk curves in fine detail. LOPA occupies the practical middle ground.

| Aspect | HAZOP / PHA | LOPA | QRA |
|---|---|---|---|
| Purpose | Identify hazards and deviations | Test safeguard sufficiency for specific scenarios | Full probabilistic risk quantification |
| Precision | Qualitative (risk ranking) | Semi-quantitative (order of magnitude) | Quantitative (detailed probability) |
| Scope per study | Entire process or system | One cause–one consequence at a time | Multiple scenarios, full consequence modeling |
| Resource intensity | Moderate | Low to moderate | High |
| Typical output | Hazard register, recommendations | Risk gap, SIL target, go/no-go | Risk contours, F-N curves, cost-benefit |

LOPA vs HAZOP in Simple Terms

HAZOP asks, “What can go wrong?” LOPA asks, “Are the protections enough for what can go wrong?” A HAZOP might identify that loss of cooling on a batch reactor could lead to thermal runaway. LOPA takes that specific scenario (loss of cooling as the initiating event, thermal runaway with vessel rupture as the consequence) and calculates whether the high-temperature alarm, the safety instrumented function that triggers emergency cooling, and the rupture disc collectively reduce the event frequency below the company’s tolerable risk threshold.

LOPA vs QRA

The practical distinction is proportionality. A colleague at a large-scale continuous processing facility once described it this way: “LOPA tells you whether you need another lock on the door. QRA tells you the exact probability that someone picks every lock, climbs through the window, and steals the safe — and how far the blast radius extends.” For most process safety decisions — SIL selection, design change justification, revalidation screening — LOPA provides the defensible answer without the months of dispersion modeling and consequence analysis that QRA demands.

Why LOPA Matters in Process Safety

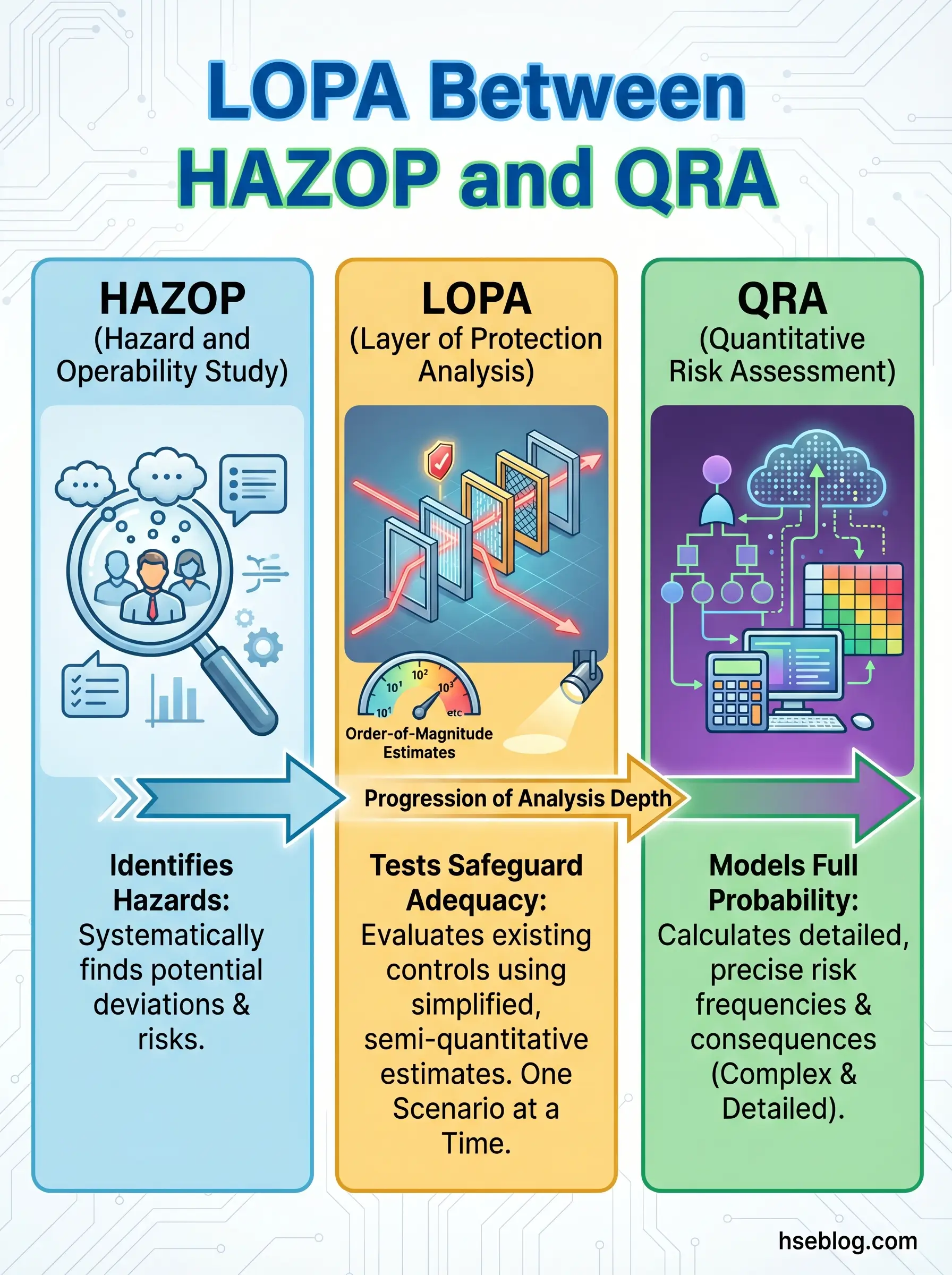

Under OSHA 29 CFR 1910.119(e)(3), Process Hazard Analyses must address safeguards, consequences of safeguard failure, facility siting, and human factors. LOPA directly serves this obligation by forcing teams to evaluate each safeguard’s actual contribution to risk reduction rather than listing safeguards and assuming they’re adequate.

Beyond compliance, LOPA solves three persistent problems in process safety decision-making:

- Eliminates subjective risk ranking debates: Instead of arguing whether a scenario is “medium” or “high” risk, the team calculates a mitigated event frequency and compares it against an agreed criterion. The number settles the argument.

- Prevents both under-protection and overdesign: I’ve seen teams add a third safety instrumented function to a reactor system because “more layers must be safer” — without recognizing that the existing SIF and the mechanical relief device already brought the scenario below tolerable risk. LOPA exposes where additional investment genuinely matters and where it’s redundant spending.

- Supports SIL selection for safety instrumented systems: When a LOPA identifies a risk gap that requires a Safety Instrumented Function, the size of that gap directly informs the SIL target — SIL 1, SIL 2, or SIL 3 — under the IEC 61511 functional safety lifecycle.

What Counts as a Protection Layer in LOPA?

A batch reactor producing specialty intermediates typically has multiple lines of defense between a process upset and a catastrophic outcome. Not all of them count equally in a LOPA. Understanding which layers exist — and which ones actually earn credit — is the foundation of the entire method.

Protection layers in process safety generally fall along a spectrum from inherently safe design features through to emergency response. The table below shows typical layers encountered in process facilities, ordered from the innermost layer, closest to the process, outward to emergency response:

| Layer | Example | Type |
|---|---|---|
| Inherent safety / process design | Reduced inventory, less hazardous solvent | Preventive |
| Basic Process Control System (BPCS) | Temperature control loop maintaining setpoint | Preventive |
| Critical alarm + operator response | High-temperature alarm with defined operator action | Preventive |
| Safety Instrumented System (SIS) | Emergency shutdown triggered by high-high pressure | Preventive |
| Physical protection | Rupture disc, pressure relief valve | Mitigative |
| Containment | Dike, secondary containment, blast wall | Mitigative |
| Fire & gas detection + emergency response | Gas detectors triggering deluge, plant emergency plan | Mitigative |

Each of these layers can reduce risk — but whether a specific layer qualifies as an Independent Protection Layer for LOPA credit is a separate, stricter question.

Typical Process Protection Layers

Process design features — such as selecting a solvent with a higher boiling point or reducing vessel inventory — sit at the innermost layer and prevent the hazardous scenario from developing in the first place. The BPCS manages normal operations and catches routine deviations. Alarms alert operators to abnormal conditions, but only earn LOPA credit when paired with a defined, practiced operator response within a credible time window. Safety instrumented systems operate independently from the BPCS and execute predefined safety functions. Relief devices and containment mitigate consequences after the event initiates.

What Is an Independent Protection Layer (IPL)?

“So if my site has six safeguards listed against this scenario, does that mean I have six IPLs?” A junior engineer asked me that during her first LOPA session, and the answer reshaped her understanding of the entire method. Not every safeguard earns IPL credit. An IPL is a device, system, or action that meets four strict qualification criteria — and if it fails any one of them, it doesn’t count.

An Independent Protection Layer must be:

- Independent: It cannot share a common cause of failure with the initiating event or with any other IPL credited in the same scenario. If the BPCS failure is the initiating event, the BPCS control loop cannot also be credited as an IPL.

- Effective (Specific): It must be capable of preventing the consequence by itself, regardless of what other layers do. A general housekeeping program is a good practice but cannot prevent a specific overpressure event.

- Dependable: Its probability of failure on demand (PFD) must be quantifiable and low enough to claim meaningful risk reduction — typically a PFD of 0.1 or better for most IPLs. This means it must be tested, maintained, and proven to work at that reliability.

- Auditable: Evidence must exist that the IPL is maintained and tested at the frequency needed to sustain its claimed PFD. If you can’t show test records, you can’t claim the credit.

Field Test: Before crediting any safeguard as an IPL, ask three questions: Can it fail for the same reason the scenario starts? Does it actually prevent this specific consequence? Can I show an auditor the test records? If any answer is no, it’s a safeguard — not an IPL.
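The four criteria and the field test above translate into a simple screening function. This is a minimal sketch: the `Safeguard` record, its field names, and the 0.1 PFD ceiling (taken from the “0.1 or better” rule of thumb above) are illustrative assumptions, and each boolean stands in for a judgment the LOPA team still has to make and document.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Safeguard:
    """One safeguard listed on a HAZOP/LOPA worksheet (hypothetical record layout)."""
    name: str
    independent: bool       # no common cause with the initiating event or other credited IPLs
    specific: bool          # can prevent this consequence on its own
    pfd: Optional[float]    # claimed probability of failure on demand; None if unquantified
    has_test_records: bool  # auditable proof-test and maintenance evidence exists


def qualifies_as_ipl(s: Safeguard, max_pfd: float = 0.1) -> bool:
    """Apply the four IPL criteria; failing any one means no LOPA credit."""
    dependable = s.pfd is not None and s.pfd <= max_pfd
    return s.independent and s.specific and dependable and s.has_test_records


safeguards = [
    Safeguard("Operator training", True, False, None, False),
    Safeguard("SIS high-pressure trip", True, True, 1e-2, True),
    Safeguard("Relief valve, no test records", True, True, 1e-2, False),
]
credited = [s.name for s in safeguards if qualifies_as_ipl(s)]
print(credited)  # only the SIS trip passes all four checks
```

The relief valve row illustrates the auditability point: a physically capable device still earns no credit if its test history cannot be shown.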

Safeguard vs IPL

All IPLs are safeguards. Not all safeguards are IPLs. This distinction trips up more first-time LOPA teams than any other concept. A site might list “operator training,” “safety signage,” “weekly supervisor rounds,” and “standard operating procedure” as safeguards against a particular hazard. None of these meet the independence, specificity, and dependability criteria required for IPL credit. They reduce risk in a general sense, but LOPA demands quantifiable, testable, scenario-specific layers.

Examples of What Is Not an IPL

Certain safeguards commonly appear on HAZOP worksheets but routinely fail IPL qualification:

- Community emergency response or external fire brigade: Response time is too variable and too far removed from the initiating event to claim a specific PFD.

- A second alarm sharing the same sensor or logic solver as the first: Common-cause failure eliminates independence. If the shared sensor fails, both alarms fail.

- Operator response without a defined procedure and adequate time: “The operator will notice” is not an IPL. The response must have a written procedure, a realistic time window, and a demonstrated success rate.

- Training, PPE, or administrative controls alone: These reduce exposure or improve awareness, but they lack the specificity and quantifiable dependability LOPA requires.

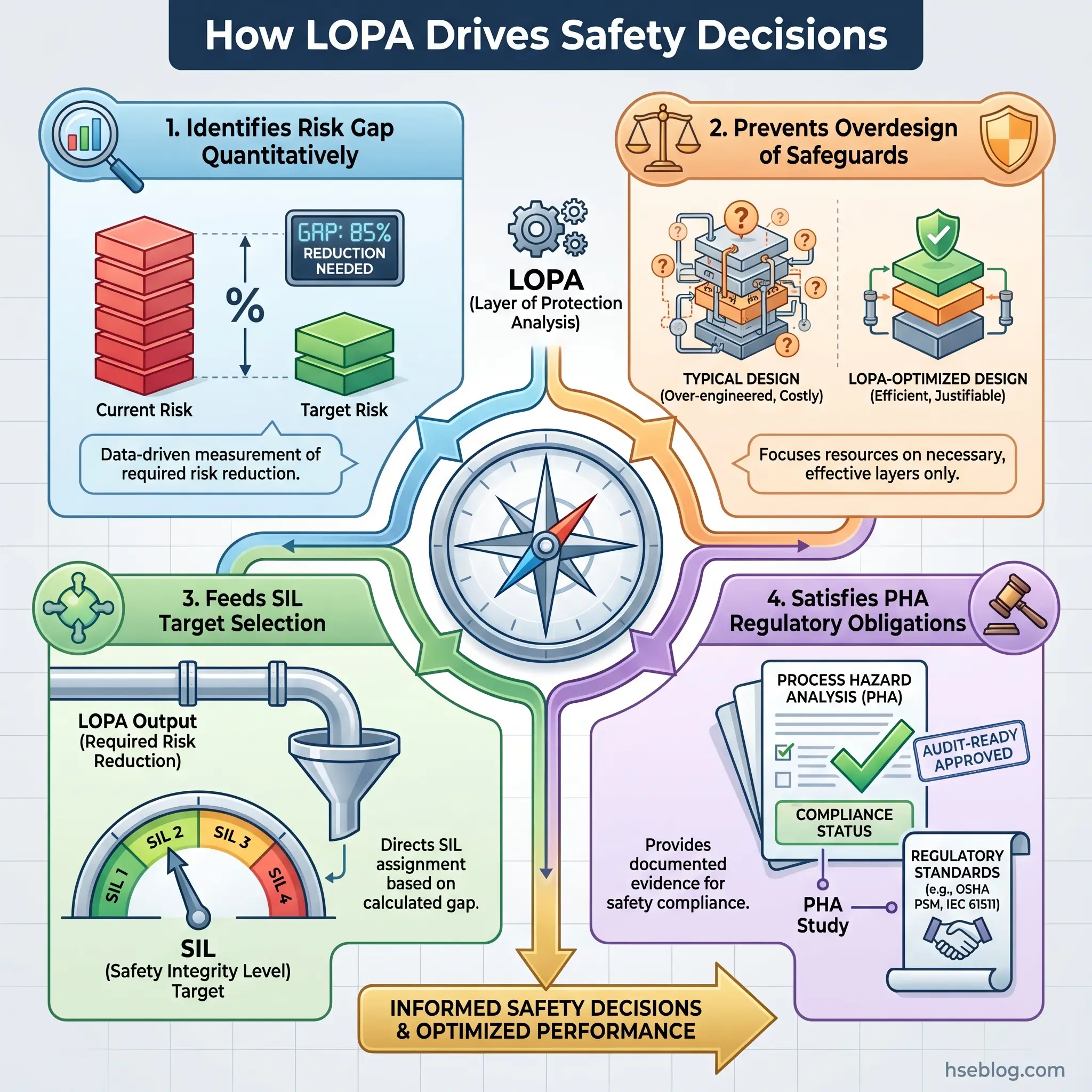

How a Basic LOPA Study Works Step by Step

The LOPA workflow follows a logical sequence that mirrors how risk actually propagates through a process system. Each step builds on the previous one, and skipping or rushing any step weakens the entire analysis. OSHA PSM under 29 CFR 1910.119(e)(4) requires that the PHA team include both process expertise and methodology expertise — LOPA is no different. Run it with a multidisciplinary team, not a single engineer filling in a spreadsheet alone.

Step 1 — Select a Scenario and Define the Consequence

LOPA evaluates one initiating cause paired with one consequence. This is the most fundamental discipline of the method. “Reactor overpressure” is not a LOPA scenario — it’s a hazard. A LOPA scenario reads: Loss of cooling water to Reactor R-201 (initiating cause) leading to thermal runaway and vessel rupture (consequence). Define the consequence clearly: fatality, serious injury, environmental release above a threshold, or major property damage. The severity category determines which risk tolerance criterion applies.

Step 2 — Estimate Initiating Event Frequency

Assign a frequency to the initiating event, expressed per year. Sources include plant-specific historical data, industry failure rate databases, engineering judgment informed by equipment type and operating conditions, or published generic data. For a cooling water pump failure, a reasonable generic frequency might be 1 × 10⁻¹ per year (once per ten years). The key discipline here is honesty: these are order-of-magnitude estimates, not precise calculations. Treating a rough estimate as a precise number creates false confidence in the final result.
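Where plant history exists, it is worth checking the assumed frequency against it before the session moves on. The sketch below is a hypothetical helper, not a standard method; the “ten times the assumed value” flag is an illustrative threshold chosen to match the order-of-magnitude spirit of LOPA, and the event counts are made up.

```python
from typing import Tuple


def check_initiating_frequency(
    events: int, years_observed: float, assumed_per_year: float
) -> Tuple[float, bool]:
    """Compare site history against the frequency assumed in the LOPA.

    Returns (observed rate per year, flag). The flag is True when the site's
    own history is at least ten times the assumed value, i.e. the assumption
    looks optimistic by an order of magnitude or more (illustrative threshold).
    Zero events over a short window does NOT prove a low frequency.
    """
    if years_observed <= 0:
        raise ValueError("observation period must be positive")
    if events < 0:
        raise ValueError("event count cannot be negative")
    observed = events / years_observed
    return observed, observed >= 10 * assumed_per_year


# Hypothetical site history: two cooling-water pump trips in five years.
rate, contradicts = check_initiating_frequency(2, 5.0, assumed_per_year=1e-1)
print(rate, contradicts)
```

With an assumed 10⁻¹ per year, two events in five years is within the same order of magnitude; against an assumed 10⁻⁴ per year the same history would raise the flag immediately.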

Step 3 — Identify IPLs and Their Effectiveness

List every safeguard between the initiating event and the consequence. Then apply the four IPL qualification criteria from the previous section to each one. Only those that pass all four criteria earn LOPA credit. For each credited IPL, assign a Probability of Failure on Demand: the likelihood it fails to perform when needed. A SIL 1 safety instrumented function is typically credited at a PFD of 10⁻¹ and a SIL 2 function at 10⁻²; a relief valve might carry 10⁻². These values come from reliability data, site test records, and standards guidance.

Watch For: The most common mistake in early LOPA sessions is double-counting. If the BPCS failure is the initiating event, the high-temperature alarm driven by the same BPCS cannot be credited as an IPL. I’ve stopped sessions to redraw the logic diagram on a whiteboard when teams couldn’t see the shared dependency until it was mapped visually.

Step 4 — Apply Conditional Modifiers

Not every initiating event leads to the defined consequence with certainty. Conditional modifiers adjust the frequency to account for conditions that must also be true. Common modifiers include probability of ignition (if the consequence requires a fire or explosion), probability of a specific wind direction (for toxic release scenarios), occupancy factor (whether personnel are present in the affected area), and probability of a specific process state (if the hazard only exists during certain operating phases). During batch changeover in my facility, occupancy in the reactor bay drops to near zero for a 45-minute window — that occupancy modifier meaningfully changes the risk picture for scenarios in that time frame.
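A time-at-risk occupancy estimate and the combination of modifiers can be sketched as follows. This is a minimal illustration under stated assumptions: occupancy is taken as the plain fraction of the year someone is present (hypothetical 876 hours here), and modifiers are multiplied only because they are assumed independent. Real teams should check that assumption, since operators are often drawn *toward* an upset to respond, which raises occupancy exactly when it matters.

```python
from typing import Iterable

HOURS_PER_YEAR = 8760.0


def occupancy_modifier(hours_present_per_year: float) -> float:
    """Fraction of the year someone is in the affected area (simple time-at-risk estimate)."""
    if not 0.0 <= hours_present_per_year <= HOURS_PER_YEAR:
        raise ValueError("hours present must lie between 0 and 8760")
    return hours_present_per_year / HOURS_PER_YEAR


def combined_modifier(modifiers: Iterable[float]) -> float:
    """Multiply conditional modifiers assumed to be independent (each in (0, 1])."""
    result = 1.0
    for m in modifiers:
        if not 0.0 < m <= 1.0:
            raise ValueError(f"modifier {m} is not a probability in (0, 1]")
        result *= m
    return result


# Hypothetical inputs: someone in the area about 876 h/yr, ignition probability 0.1.
occ = occupancy_modifier(876.0)
print(combined_modifier([occ, 0.1]))
```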

Step 5 — Compare Against Target Risk

Multiply the initiating event frequency by each conditional modifier, then multiply by the PFD of each credited IPL. The result is the mitigated event frequency — the estimated frequency of the consequence occurring despite all credited layers. Compare this against the company’s risk tolerance criteria (often expressed as a maximum tolerable frequency per year for a given consequence severity, or framed within an ALARP framework). If the mitigated frequency exceeds the target, the “risk gap” tells you exactly how much additional risk reduction is needed — often expressed as the required SIL for a new safety instrumented function.
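Step 5 is plain multiplication followed by one division. The sketch below expresses it directly; the function names are hypothetical, and the floating-point tolerance in `risk_gap` is an implementation detail so that a result landing exactly on the criterion is not reported as a tiny gap.

```python
import math
from typing import Iterable


def mitigated_event_frequency(
    initiating_per_year: float,
    conditional_modifiers: Iterable[float],
    ipl_pfds: Iterable[float],
) -> float:
    """IEF x product of conditional modifiers x product of credited IPL PFDs."""
    mef = initiating_per_year
    for factor in list(conditional_modifiers) + list(ipl_pfds):
        if not 0.0 < factor <= 1.0:
            raise ValueError(f"{factor} is not a probability in (0, 1]")
        mef *= factor
    return mef


def risk_gap(mef_per_year: float, tolerable_per_year: float) -> float:
    """Additional risk reduction factor still needed; 1.0 means the target is met."""
    ratio = mef_per_year / tolerable_per_year
    # Treat values within floating-point noise of the target as meeting it.
    if ratio <= 1.0 or math.isclose(ratio, 1.0):
        return 1.0
    return ratio


# Hypothetical scenario: IEF 1e-1/yr, one IPL at PFD 1e-2, criterion 1e-5/yr.
mef = mitigated_event_frequency(1e-1, conditional_modifiers=[], ipl_pfds=[1e-2])
print(mef, risk_gap(mef, tolerable_per_year=1e-5))  # gap of about 100
```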

A Simple LOPA Example for Beginners

Working through a concrete example makes the abstract framework tangible. Consider a simplified tank overfill scenario in a solvent storage area.

Scenario: Level control on Tank T-401 fails, allowing the inlet to keep filling the tank (initiating cause), leading to solvent overfill, a ground-level pool, ignition, and pool fire (consequence) with potential for serious injury.

| LOPA Element | Value | Basis |
|---|---|---|
| Initiating event | BPCS level control failure | Scenario definition |
| Initiating event frequency | 1 × 10⁻¹ /year | Generic instrument failure rate |
| IPL 1: Independent high-level alarm + operator response | PFD = 1 × 10⁻¹ | Tested alarm, written procedure, adequate response time |
| IPL 2: SIS high-high level trip closing inlet valve | PFD = 1 × 10⁻² | SIL 2 rated, proof-tested annually |
| Conditional modifier: Probability of ignition | 0.1 | Open-air storage, limited ignition sources |
| Mitigated event frequency | 1 × 10⁻¹ × 10⁻¹ × 10⁻² × 0.1 = 1 × 10⁻⁵ /year | IEF × IPL PFDs × conditional modifier |
| Risk tolerance criterion | 1 × 10⁻⁵ /year | Company standard for serious injury |
| Decision | Meets target; no additional IPL required | Mitigated frequency ≤ criterion |

The multiplication logic is straightforward: each IPL and each conditional modifier reduces the frequency by its credited factor. If the mitigated event frequency had landed at 1 × 10⁻⁴, one order of magnitude above the target, the LOPA would conclude that an additional IPL with a PFD of 10⁻¹ or lower (a risk reduction factor of at least 10) is needed, or that an existing IPL must be upgraded.
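To check the arithmetic in the T-401 worksheet and the 10⁻⁴ what-if, the whole scenario fits in one small function. This is a sketch with a hypothetical `evaluate_scenario` helper; the variant case assumes no ignition credit (modifier taken as 1) purely to reproduce a result one order above the target.

```python
import math
from typing import Sequence, Tuple


def evaluate_scenario(
    ief: float, modifiers: Sequence[float], ipl_pfds: Sequence[float], tolerable: float
) -> Tuple[float, bool, float]:
    """Return (mitigated frequency, meets target?, extra risk reduction factor needed)."""
    mef = ief * math.prod(modifiers) * math.prod(ipl_pfds)
    # isclose guards against floating-point noise when MEF lands exactly on the criterion
    meets = mef < tolerable or math.isclose(mef, tolerable)
    return mef, meets, 1.0 if meets else mef / tolerable


# Tank T-401 overfill, using the values from the worksheet table.
base = evaluate_scenario(
    ief=1e-1,               # BPCS level control failure, per year
    modifiers=[0.1],        # probability of ignition
    ipl_pfds=[1e-1, 1e-2],  # alarm + operator response, SIS high-high level trip
    tolerable=1e-5,         # serious-injury criterion, per year
)

# Hypothetical variant: same layers, but no ignition credit, which puts the
# MEF at 1e-4, one order of magnitude above the target.
variant = evaluate_scenario(1e-1, [1.0], [1e-1, 1e-2], 1e-5)
print(base)
print(variant)
```

The variant returns a required risk reduction factor of about 10, which is the “additional IPL with a PFD of 10⁻¹” conclusion in numeric form.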

Audit Point: When reviewing a completed LOPA worksheet, check that no two credited IPLs share a sensor, logic solver, or final element. Then verify that each IPL’s claimed PFD matches the actual proof-test interval and test records. Worksheets that look clean on paper fall apart when the testing data doesn’t support the numbers.
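One way to sanity-check a claimed PFD against the proof-test interval is the widely used single-channel approximation PFDavg ≈ λ_DU × T / 2, where λ_DU is the dangerous undetected failure rate and T is the proof-test interval. The sketch below assumes a hypothetical λ_DU of 0.02 per year. It ignores common-cause failure, repair time, and imperfect proof tests, so treat it as a screening aid for worksheet review, not as SIL verification.

```python
def pfd_avg_1oo1(lambda_du_per_year: float, test_interval_years: float) -> float:
    """Simplified average PFD for a single-channel (1oo1) device: lambda_DU * T / 2.

    Only meaningful when lambda_DU * T is small (well under 0.1).
    """
    if lambda_du_per_year < 0 or test_interval_years <= 0:
        raise ValueError("rate must be non-negative and interval positive")
    return lambda_du_per_year * test_interval_years / 2.0


LAMBDA_DU = 0.02    # hypothetical dangerous-undetected failure rate, per year
CLAIMED_PFD = 1e-2  # PFD written on the worksheet

for interval in (0.25, 1.0, 3.0):  # quarterly, annual, every three years
    pfd = pfd_avg_1oo1(LAMBDA_DU, interval)
    supported = pfd <= CLAIMED_PFD
    print(f"test every {interval} yr: PFDavg ~ {pfd:.4f}, supports claim: {supported}")
```

Under these assumptions, stretching the interval from one year to three roughly triples the average PFD, which is why a stale test record undermines the credited value.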

When Should You Use LOPA?

LOPA fits a specific range of process safety decisions. Applying it to the right problems maximizes its value; forcing it onto the wrong problems produces misleading results.

LOPA is most effective for:

- Post-HAZOP scenario deep dives: When a HAZOP team flags a scenario with high consequences but is uncertain whether existing safeguards are sufficient, LOPA provides the structured evaluation. This is LOPA’s most common application.

- SIL selection for Safety Instrumented Functions: The risk gap identified by LOPA directly informs the required SIL target under the functional safety lifecycle described in IEC 61511 and the ISA-84 series.

- Management of Change reviews: When a process modification alters initiating event frequencies or affects existing protection layers, LOPA re-evaluates whether the risk picture has changed.

- PHA revalidation: OSHA PSM requires PHA revalidation at least every five years for covered processes. LOPA can screen previously analyzed scenarios to confirm safeguard adequacy or identify degradation.

- Prioritizing risk reduction investments: When budgets are limited, LOPA identifies which scenarios have genuine risk gaps and which are already adequately protected — directing capital where it actually reduces risk.

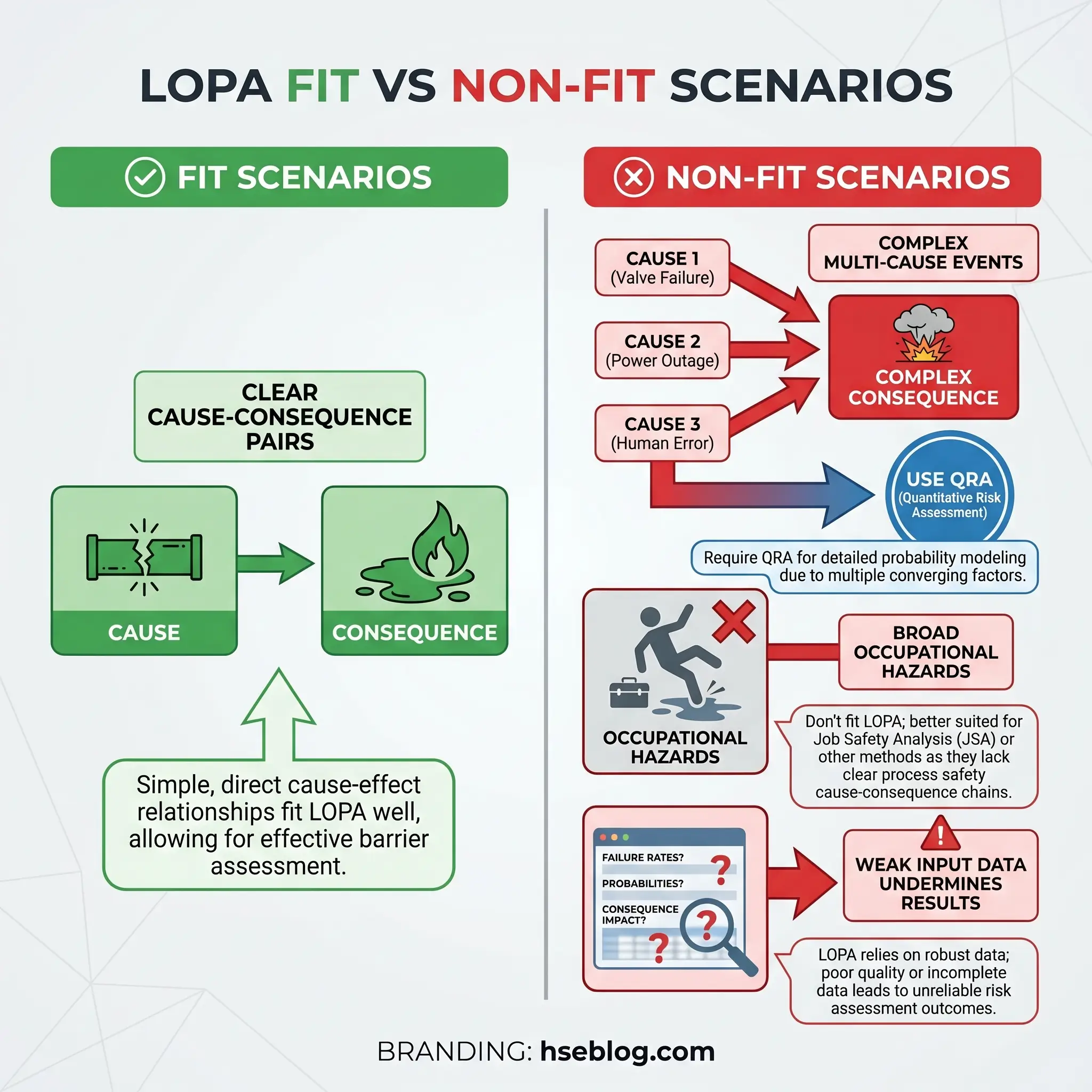

When LOPA Is Not the Right Tool

One of the most practical skills in process safety is knowing when a tool doesn’t fit. LOPA has clear boundaries, and pushing it beyond them produces answers that look precise but aren’t defensible.

| Use LOPA When | Don’t Use LOPA When |
|---|---|
| Hazard mechanism is well understood | Hazard mechanism is poorly characterized or novel |
| Cause–consequence relationship is clear | Multiple interacting causes with complex dependencies |
| IPLs can be identified and their PFD estimated | No credible protection layers exist to evaluate |
| Order-of-magnitude precision is sufficient | Decision requires detailed consequence/frequency modeling |
| Scenario fits the cause → layers → consequence framework | Broad occupational safety issues (slips, ergonomics) |

LOPA is not a replacement for HAZOP — it requires HAZOP (or equivalent PHA) to have already identified the scenarios. It is not a full QRA — it doesn’t model dispersion, blast overpressure contours, or dose-response relationships. And critically, LOPA is only as good as its inputs. When I reviewed a LOPA study from a sister facility that used an initiating event frequency of 10⁻⁴ for a scenario that actually occurred twice in five years, the entire analysis collapsed. Weak inputs create weak outputs, regardless of how correctly the multiplication is performed.

Common Beginner Mistakes in LOPA

After facilitating and reviewing LOPA sessions across multiple batch and continuous process units, certain mistakes appear with predictable regularity. Recognizing them early saves hours of rework and prevents flawed risk decisions.

- Counting safeguards that aren’t independent: The BPCS control loop and the BPCS-driven alarm share the same logic solver. Crediting both as separate IPLs is the single most frequent error. If one fails, both fail.

- Using vague scenario definitions: “Loss of containment from the reactor” is not a scenario — it’s a category. Specify the initiating cause, the specific equipment, the failure mechanism, and the defined consequence.

- Treating estimated frequencies as precise data: Writing 2.7 × 10⁻³ per year implies a precision that order-of-magnitude data doesn’t support. Stick to powers of ten unless site-specific data genuinely justifies finer resolution.

- Ignoring human factors in operator-response IPLs: Crediting an alarm-and-response IPL at PFD 10⁻¹ assumes the operator is available, trained, not fatigued, not distracted by simultaneous alarms, and has enough time to act. During a night shift with a single operator covering three process areas, that assumption deserves scrutiny.

- Skipping auditability checks: An IPL that was proof-tested once three years ago does not carry the same PFD as one tested quarterly. If the maintenance records don’t support the claimed reliability, the credit isn’t defensible.

The Fix That Works: Before finalizing any LOPA worksheet, draw a simple dependency map showing the initiating event, each credited IPL, and every shared element — sensors, logic solvers, power supplies, and human responders. If any two lines connect to the same box, one of those IPLs loses its credit.
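The dependency map is, at its core, a set-intersection check across every pair of boxes. The sketch below assumes hypothetical instrument tags and element names; it can only find dependencies that someone has written down, so human responders, power supplies, and shared final elements need to be entered explicitly.

```python
from itertools import combinations
from typing import Dict, List, Set, Tuple


def shared_dependencies(
    elements: Dict[str, Set[str]]
) -> List[Tuple[str, str, Set[str]]]:
    """Return every pair of elements (initiating event or credited IPL) that share a component."""
    conflicts = []
    for (name_a, deps_a), (name_b, deps_b) in combinations(elements.items(), 2):
        shared = deps_a & deps_b
        if shared:
            conflicts.append((name_a, name_b, shared))
    return conflicts


# Hypothetical tags for a tank overfill scenario.
dependency_map = {
    "IE: BPCS level loop fails": {"LT-101", "BPCS controller", "LV-101"},
    "IPL: BPCS high-level alarm": {"LT-101", "BPCS controller", "operator"},
    "IPL: SIS high-high trip": {"LT-102", "SIS logic solver", "XV-101"},
}

for a, b, shared in shared_dependencies(dependency_map):
    print(f"{a} <-> {b}: shares {sorted(shared)}")
```

Here the BPCS-driven alarm shares both the transmitter and the controller with the initiating event, so it drops out as an IPL, while the SIS trip stays independent.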

How LOPA Supports SIL Selection and Functional Safety

When a LOPA identifies a risk gap — the mitigated event frequency exceeds the tolerable threshold even after crediting all existing IPLs — the gap quantifies how much additional risk reduction is needed. If that additional reduction will be provided by a Safety Instrumented Function, the magnitude of the gap determines the SIL target.

A risk gap of one order of magnitude (a required risk reduction factor of 10, equivalent to a PFD of 10⁻¹) corresponds to SIL 1. Two orders of magnitude (a factor of 100, PFD of 10⁻²) points to SIL 2, and three (a factor of 1,000, PFD of 10⁻³) points to SIL 3. This direct linkage between LOPA output and SIL requirement is one of the method’s most valuable practical applications.
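The one-order-per-SIL rule of thumb can be written as a small lookup. This sketch follows that convention only; the standard’s own PFD bands (for example, SIL 1 covering PFDavg from 10⁻² up to but not including 10⁻¹) should govern borderline cases. Returning `None` above SIL 3 is an assumption made here to signal that the design itself should be revisited rather than a SIL 4 function specified.

```python
import math
from typing import Optional


def sil_target(required_rrf: float) -> Optional[int]:
    """Map a required risk reduction factor (the LOPA risk gap) to a SIL target.

    One-order-per-SIL convention: RRF up to 10 -> SIL 1, up to 100 -> SIL 2,
    up to 1,000 -> SIL 3. Returns 0 when no gap remains, and None when more
    than SIL 3 would be needed.
    """
    if required_rrf <= 1.0:
        return 0
    # Tolerance keeps exact powers of ten (10, 100, 1000) in the lower band.
    orders = math.ceil(math.log10(required_rrf) - 1e-9)
    return orders if orders <= 3 else None


for gap in (0.5, 10, 20, 100, 1000, 10_000):
    print(gap, sil_target(gap))
```

A gap between 1 and 10 maps to SIL 1 here because that is the lowest SIL; in practice the team may close a small gap with a non-SIS IPL instead.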

IEC 61511 governs the lifecycle requirements for Safety Instrumented Systems in the process industry sector, from concept through decommissioning. The 2016 edition with its 2017 amendment remains the current core standard — the IEC’s 2026 series package still references these same documents, confirming that the practical standards base has remained stable rather than being replaced by a newer edition. The ISA-84 series and ISA-TR84.00.02-2022 provide practical implementation guidance for applying HAZOP, LOPA, and fault tree methods within functional safety work.

It’s worth noting that SIL selection (determining the target) and SIL verification (confirming the SIF design achieves the target PFD) are separate activities. LOPA handles the first. Verification requires detailed reliability modeling of the SIF architecture — a different discipline entirely.

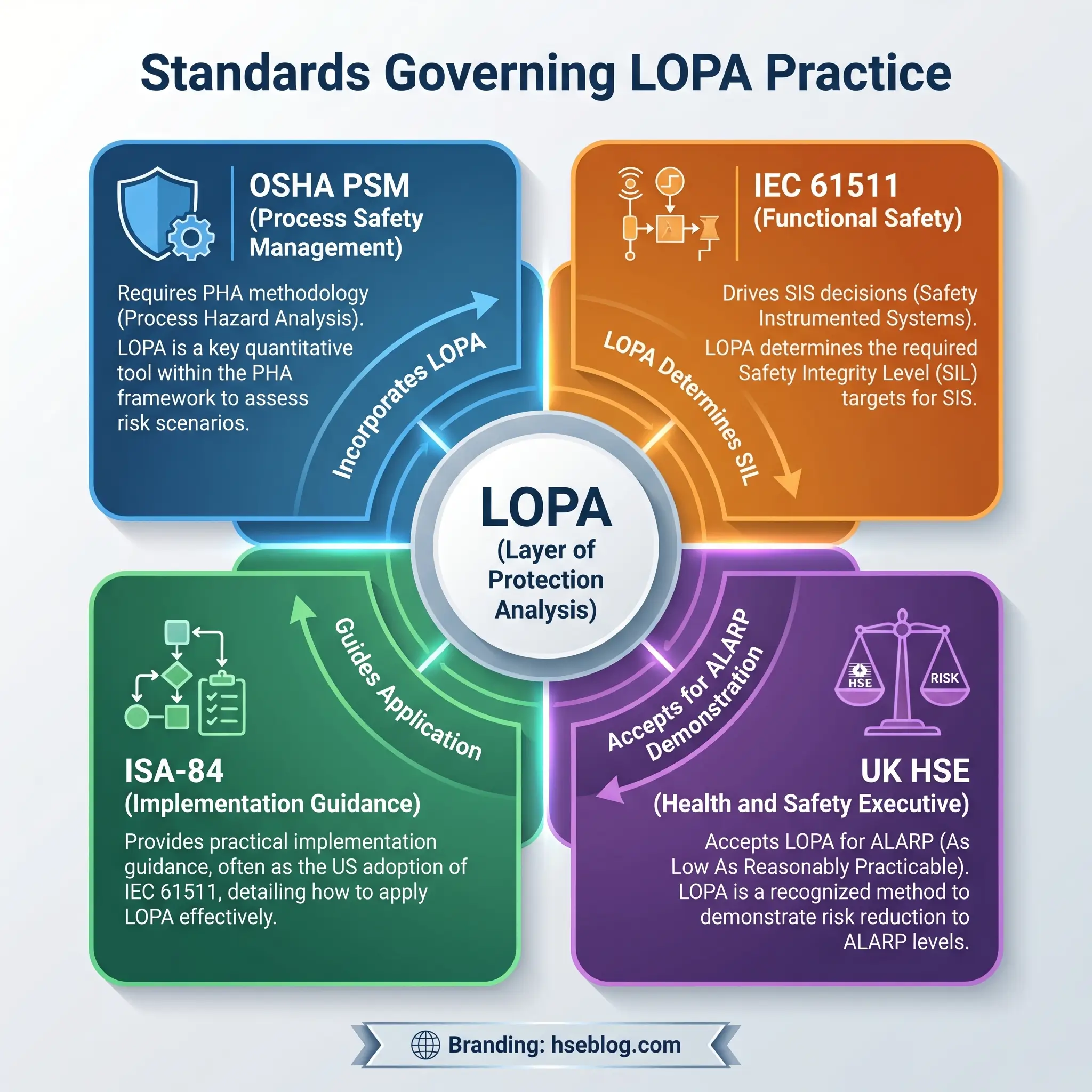

What Standards and Regulations Matter for LOPA?

LOPA is not itself a legally mandated method in most jurisdictions. Rather, it’s a widely accepted tool used to satisfy or strengthen broader process hazard analysis and functional safety obligations. Understanding which standards apply — and how they connect — prevents both regulatory overclaiming and compliance gaps.

| Standard | Relevance to LOPA |
|---|---|
| OSHA 29 CFR 1910.119(e) | Requires initial PHA, allows “appropriate equivalent methodology,” mandates safeguard evaluation and revalidation every 5 years |
| IEC 61511:2016 + AMD1:2017 | Core SIS lifecycle requirements; LOPA commonly used to determine risk reduction needs and SIF necessity |
| ISA-84 / ISA-TR84.00.02-2022 | Practical guidance for implementing functional safety using HAZOP, LOPA, and fault tree techniques |
| UK HSE ALARP guidance | Accepts LOPA-based strength-in-depth arguments in major-hazard ALARP decisions |

OSHA’s PSM standard under 29 CFR 1910.119(e)(2)(vii) allows the use of “an appropriate equivalent methodology” for process hazard analysis. LOPA is widely accepted as such a methodology when used to deepen selected HAZOP scenarios. The standard also requires PHA teams to include both process expertise and methodology expertise — a requirement that applies directly to LOPA facilitation.

In UK major-hazard contexts, HSE guidance continues to reference LOPA within ALARP decision-making, reinforcing that the method remains active in contemporary risk-justification practice rather than being a legacy approach. For international readers, this means LOPA has regulatory recognition across both prescriptive (OSHA) and goal-setting (UK HSE) regulatory frameworks.

Final Thoughts on LOPA

The most persistent lesson from years of facilitating LOPA sessions in batch chemical operations is that the method’s value doesn’t come from the multiplication — any spreadsheet handles that. It comes from the discipline of forcing teams to answer uncomfortable questions honestly. Is that alarm truly independent from the control system that failed? Does the operator genuinely have enough time to respond during a night shift? When was the last proof test on that SIF, and did it actually pass?

Teams that treat LOPA as a paperwork exercise — filling cells to get a number below the line — produce worksheets that satisfy audits but miss real risk. The studies that prevent incidents are the ones where someone stops the session and says, “Wait, that safeguard shares the same transmitter as the initiating cause.” That single observation, mapped on a whiteboard in a stuffy conference room, is worth more than a hundred perfectly formatted worksheets. LOPA works when the team behind it is willing to challenge assumptions, verify data, and accept that some scenarios need more protection than the current design provides.

The SAFEChE LOPA tutorial from the University of Michigan offers an accessible visual walkthrough for practitioners who want to see the method applied in an academic setting before running their first live study.